By the time you finish this story, you’ll have predicted the future dozens of times. You’ve already guessed from the headline what it’s about and whether you’ll enjoy it. These opening words help you judge whether the rest is worth bothering with. And if you expect it will mention the oracle of Delphi, Nancy Reagan’s astrologer, and chimpanzees playing darts, you’ve already got three things right.

We are all forecasters. We all want to know what is going to happen next. Will I get COVID-19? Will I have a job in three months’ time? Will the shops have what I need? Will I have time to finish my project? Will Donald Trump be re-elected President of the United States?

Yet although we regularly predict the outcomes of questions like these, we are often not very good at doing so. People tend to “believe that their futures will be better than can possibly be true,” according to a paper by a team of psychologists that included Neil Weinstein of Rutgers University, the first modern psychologist to study “unrealistic optimism,” as he termed it. The authors write:

This bias toward favorable outcomes… appears for a wide variety of negative events, including diseases such as cancer, natural disasters such as earthquakes and a host of other events ranging from unwanted pregnancies and radon contamination to the end of a romantic relationship. It also emerges, albeit less strongly, for positive events, such as graduating from college, getting married and having favorable medical outcomes.

Our poor ability to predict future events is why we turn to prediction experts: meteorologists, economists, psephologists (quantitative predictors of elections), insurers, doctors, and investment fund managers. Some are scientific; others are not. Nancy Reagan hired an astrologer, Joan Quigley, to screen Ronald Reagan’s schedule of public appearances according to his horoscope, allegedly in an effort to avoid assassination attempts. We hope these modern oracles can see what’s coming and help us prepare for the future.

This is another mistake, according to a psychologist whose name many forecasting aficionados will have no doubt foreseen: Philip Tetlock, of the University of Pennsylvania. Experts, Tetlock said in his 2006 book Expert Political Judgment, are about as accurate as “dart-throwing chimps.”

His critique is that experts tend to be wedded to one particular big idea, which causes them to fail to see the full picture. Think of Irving Fisher, the most famous American economist of the 1920s, a contemporary and rival of John Maynard Keynes. Fisher is notorious for announcing, in 1929, that stock prices had reached a “permanently high plateau” just a few days before the Wall Street Crash. Fisher was so convinced of his theory that he continued to say stocks would rebound for months afterwards.

In fact, Tetlock found, some people can predict the future pretty well: people with a reasonable level of intelligence who search for information, change their minds when the evidence changes, and think of possibilities rather than certainties.

The “acid test” of his theory came when the Intelligence Advanced Research Projects Activity (IARPA) sponsored a forecasting tournament. Five university groups competed to predict geopolitical events, and Tetlock’s team won, by discovering and recruiting an army of forecasters, then creaming off the best of the crop as “superforecasters.” According to his research, these people are in the top 2% of prediction-makers: they make their forecasts sooner than everyone else and are more likely to be right.

No wonder that corporations, governments, and influential people such as Dominic Cummings, the architect of Brexit and chief advisor to Boris Johnson, want to tap into their predictive powers. But it’s hardly the first time the powerful have turned to futurists for help.

* * *

The sanctuary of Delphi, on the slopes of Mount Parnassus in Greece, has been a byword for prediction ever since Croesus, the king of Lydia, conducted a classical version of IARPA’s experiment sometime in the early sixth century BCE. Pondering whether he should go to war with the expansionist Persians, Croesus sought some trusted advice. He sent envoys to the most important oracles in the known world with a test to see which was the most accurate. Exactly 100 days after their departure from the Lydian capital of Sardis (its ruins are about 250 miles south of Istanbul), the envoys were to ask the oracles what Croesus was doing that day. The answers of the others were lost to the past, according to Herodotus, but the priestess at Delphi divined, apparently with the help of Apollo, the god of prophecy, that Croesus was cooking lamb and tortoise in a bronze pot with a bronze lid.

Could a modern superforecaster perform the same trick? Perhaps not. Although… is it really that much of a stretch to predict a king’s meal would be prepared in an ornate pot and involve expensive or exotic ingredients? Maybe one of the priestess’s cousins was a tortoise exporter? Perhaps Croesus was a noted tortoise gourmand?

Yet the secret to modern forecasting does lie partly in Croesus’s method of using lots of oracles at once. A well-known example comes from Francis Galton, a statistician and anthropologist—and the inventor of eugenics. In 1907, Galton published a paper about a “guess the weight of the ox” competition at a livestock fair in the southwestern English city of Plymouth. Galton acquired all the entry cards and examined them:

He found that “these afforded excellent material. The judgments were unbiassed by passion… The sixpenny [entry] fee deterred practical joking, and the hope of a prize and the joy of competition prompted each competitor to do his best. The competitors included butchers and farmers, some of whom were highly expert in judging the weight of cattle.”

The mean of the 787 entries was 1,197 pounds—a single pound less than the ox’s true weight.
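One way to see why a crowd's average can beat most of its members: the squared error of the crowd's mean always equals the average individual squared error minus the spread of the guesses around that mean. The sketch below checks this arithmetic on five made-up guesses. They are not Galton's entries, and the function name is hypothetical; only the true weight of 1,198 pounds follows from the figures above.

```python
from statistics import mean

TRUE_WEIGHT = 1198  # pounds; implied by the article (the mean of 1,197 was one pound short)

# Hypothetical guesses for illustration only; these are NOT Galton's data.
guesses = [1100, 1250, 1180, 1300, 1150]


def crowd_breakdown(guesses, truth):
    """Return (crowd error, average individual error, diversity), all squared."""
    crowd = mean(guesses)
    crowd_error = (crowd - truth) ** 2
    avg_individual_error = mean([(g - truth) ** 2 for g in guesses])
    diversity = mean([(g - crowd) ** 2 for g in guesses])
    return crowd_error, avg_individual_error, diversity


crowd_error, avg_error, diversity = crowd_breakdown(guesses, TRUE_WEIGHT)
print(f"squared error of the crowd's mean: {crowd_error}")
print(f"average individual squared error:  {avg_error}")
print(f"diversity of the guesses:          {diversity}")
```

Because the diversity term can never be negative, the crowd's mean is never worse than the typical guesser, and the more the guesses disagree, the bigger its advantage.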

The idea that a crowd might be better than an individual was not seriously considered again until 1969, when a paper by future Nobel Prize winner Clive Granger and his fellow economist J. M. Bates, both of the University of Nottingham, established that combining different forecasts was more accurate than trying to find the best one.
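A rough illustration of why combining can beat picking a winner, assuming two forecasters whose errors are unbiased and uncorrelated. Under those assumptions, weighting each forecast in inverse proportion to its error variance is a standard textbook result; this is a sketch of that idea, not necessarily the exact procedure in the 1969 paper, and the variances below are invented.

```python
def combine_two(var_a, var_b):
    """Weight on forecaster A, and the combined error variance.

    Assumes both forecasters are unbiased and their errors are uncorrelated.
    """
    weight_a = var_b / (var_a + var_b)  # the more accurate forecaster gets more weight
    weight_b = 1.0 - weight_a
    combined_var = weight_a**2 * var_a + weight_b**2 * var_b
    return weight_a, combined_var


# Hypothetical error variances: forecaster A is the better of the two.
var_a, var_b = 4.0, 9.0
weight_a, combined_var = combine_two(var_a, var_b)

# A plain 50/50 average, for comparison.
equal_weight_var = 0.25 * var_a + 0.25 * var_b

print(f"weight on A: {weight_a:.3f}")
print(f"best single forecaster: {min(var_a, var_b):.3f}")
print(f"equal-weight average:   {equal_weight_var:.3f}")
print(f"weighted combination:   {combined_var:.3f}")
```

With these numbers even the plain average has a smaller error variance than the better forecaster alone. That is not guaranteed in general: if one forecaster is far worse than the other, an equal-weight average can lose to the best individual, which is why the weights matter.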

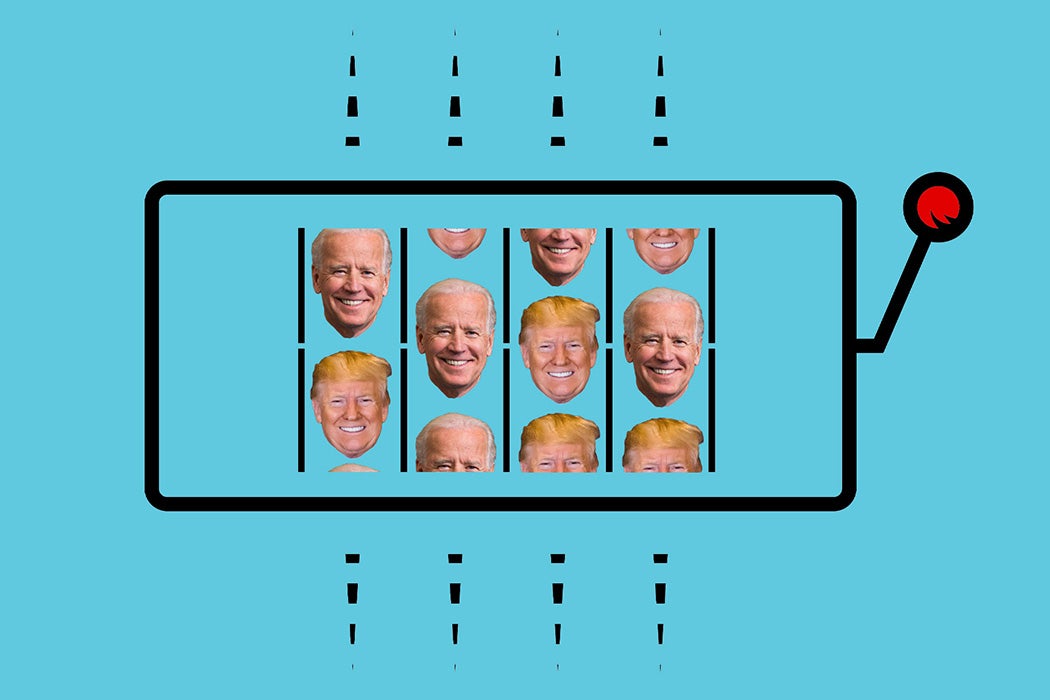

Those discoveries, combined with work by the economist Friedrich Hayek, were the foundation for prediction markets, which in effect reassemble crowds like Galton’s competition entrants around questions on other subjects. The idea is to gather a group of people who will each make a testable prediction about an event, such as “Who will win the 2020 presidential election?” People in the market can buy and sell shares in predictions. PredictIt.org, which bills itself as “the stock market for politics,” is one such prediction market.

For example, if a trader believes shares in “Donald Trump will win the U.S. presidential election in 2020” are underpriced, they could buy them and hold them until election day. If Trump wins, the trader receives $1 for every share. Shares always trade for less than $1, and the price approximates the market’s estimated probability of that outcome.
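The arithmetic behind "underpriced" can be sketched in a few lines. The prices and beliefs here are invented, the function name is hypothetical, and the sketch ignores any fees a real market might charge.

```python
def expected_profit(price, believed_probability, payout=1.0):
    """Expected profit in dollars per share, ignoring any fees.

    A share pays `payout` if the event happens and nothing otherwise.
    """
    if not 0.0 < price < payout:
        raise ValueError("price must be between 0 and the payout")
    if not 0.0 <= believed_probability <= 1.0:
        raise ValueError("probability must be between 0 and 1")
    return believed_probability * payout - price


# Hypothetical numbers: the market prices the share at 40 cents,
# but the trader thinks the true chance is 55 percent.
market_price = 0.40
trader_belief = 0.55
edge = expected_profit(market_price, trader_belief)

print(f"market-implied probability: {market_price:.0%}")
print(f"expected profit per share:  ${edge:.2f}")
```

A trader whose belief matches the price expects to make nothing, so buying only makes sense for someone who thinks the market has the probability too low. Each such purchase nudges the price toward that trader's estimate.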

Prediction markets, or information markets, can be very accurate, as outlined by James Surowiecki in his book The Wisdom of Crowds. The Iowa Electronic Markets, set up for the 1988 presidential election, were cited as proof that “prediction markets can work” by the Harvard Law Review in 2009:

In the week before presidential elections from 1988 to 2000, the IEM predictions were within 1.5 percentage points of the actual vote, an improvement upon the polls, which rely on self-reported plans to vote for a candidate and which have an error rate of over 1.9 percentage points.

Google, Yahoo!, Hewlett-Packard, Eli Lilly, Intel, Microsoft, and France Telecom have all used internal prediction markets to ask their employees about the likely success of new drugs, new products, and future sales.

Who knows what might have happened if Croesus had formed a prediction market of all the ancient oracles? Instead he put his next and most pressing question to only the Delphic oracle and one other: should he attack Cyrus the Great? The answer, Herodotus says, came back that “if he should send an army against the Persians he would destroy a great empire.” Students of riddles and small print will see the problem instantly: Croesus went to war and lost everything. The great empire he destroyed was his own.

* * *

Although prediction markets can work well, they don’t always. IEM, PredictIt, and the other online markets were wrong about Brexit, and they were wrong about Trump’s win in 2016. As the Harvard Law Review points out, they were also wrong about finding weapons of mass destruction in Iraq in 2003, and the nomination of John Roberts to the U.S. Supreme Court in 2005. There are also plenty of examples of small groups reinforcing each other’s moderate views to reach an extreme position, otherwise known as groupthink, a theory devised by Yale psychologist Irving Janis and used to explain the Bay of Pigs invasion.

The weakness of prediction markets is that no one knows if the participants are simply gambling on a hunch or if they have solid reasoning for their trade, and although thoughtful traders should ultimately drive the price, that doesn’t always happen. The markets are also no less prone to being caught in an information bubble than British investors in the South Sea Company in 1720 or speculators during the tulip mania of the Dutch Republic in 1637.

Before prediction markets, when experts were still seen by most as the only realistic route to accurate forecasting, there was a different method: the Delphi technique, devised by the RAND Corporation during the early period of the Cold War as a way to move beyond the limitations of trend analysis. The Delphi technique began by convening a panel of experts, in isolation from each other. Each expert was asked individually to complete a questionnaire outlining their views on a topic. The answers were shared anonymously and the experts asked if they wanted to change their views. After several rounds of revision, the panel’s median view was taken as the consensus view of the future.
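The rounds described above can be mimicked with a toy simulation. Everything in it is an assumption made for illustration: the starting estimates, the "openness" of each expert, and above all the rule that each expert moves a fixed fraction of the way toward the shared median. Real panelists decide for themselves whether and how to revise.

```python
from statistics import median


def run_delphi(initial_estimates, openness, rounds=3):
    """Simulate anonymous revision rounds.

    Returns (consensus, spreads), where spreads[i] is the gap between the
    highest and lowest estimate after i rounds of revision.
    """
    estimates = list(initial_estimates)
    spreads = [max(estimates) - min(estimates)]
    for _ in range(rounds):
        shared = median(estimates)  # anonymous summary shown to every expert
        # Hypothetical update rule: move partway toward the shared median.
        estimates = [e + o * (shared - e) for e, o in zip(estimates, openness)]
        spreads.append(max(estimates) - min(estimates))
    return median(estimates), spreads


# Five hypothetical experts; an openness of 0.0 means "never revises".
initial_estimates = [10, 20, 25, 40, 90]
openness = [0.5, 0.0, 0.5, 0.5, 0.25]

consensus, spreads = run_delphi(initial_estimates, openness)
print(f"consensus (final median): {consensus}")
print(f"spread after each round:  {spreads}")
```

Under this particular rule the median itself never moves; only the spread of opinion narrows from round to round. In a real panel, experts who revise for their own reasons can shift the median as well.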

In theory, this method eliminated some of the problems associated with groupthink, while also ensuring that the experts had access to the entire range of high-quality, well-informed opinions. But in “Confessions of a Delphi Panelist,” John D. Long admitted that was not always the case, given his “dread at the prospect of doing the hard thinking demanded” by the 73 questions involved:

While I am baring the shortcomings of my character, I must also say that at various stages I was sorely tempted to take the easy way out and not be unduly concerned by the quality of my response. In more than one instance, I succumbed to this temptation.

Strong skepticism about the Delphi technique meant that it was swiftly overtaken when prediction markets arrived. If only there were a way to combine the hard thinking demanded by Delphi with participation in a prediction market.

And so we return to Philip Tetlock. His IARPA competition-winning team and the commercial incarnation of his research, the Good Judgment Project, combine prediction markets with hard thinking. At Good Judgment Open, which anyone can sign up to, predictions are not monetized as in a pure prediction market, but rewarded with social status. Forecasters are ranked by Brier score, a measure of how far the probabilities they stated were from what actually happened: the closer a forecast was to the outcome, the better the score, with early forecasts scoring better. They are also encouraged to explain each prediction and to update it regularly as new information comes in. The system both delivers the crowd’s prediction and, like the Delphi technique, allows forecasters to reconsider their own thinking in the light of other people’s.
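For a yes-or-no question, a Brier score is the squared gap between the probability a forecaster gave and what happened (1 for yes, 0 for no), so lower is better. The sketch below uses that simple form. The daily averaging is an assumption about how being early might be rewarded, not a description of Good Judgment Open's exact rules, and both forecasters are invented.

```python
def brier_score(probability, outcome):
    """Squared gap between a probability forecast and the outcome (1 = yes, 0 = no)."""
    return (probability - outcome) ** 2


def time_averaged_brier(daily_probabilities, outcome):
    """Average the daily Brier scores over the life of a question.

    Assumed scheme for illustration: each day's standing forecast is scored,
    so being right early counts for more than being right late.
    """
    if not daily_probabilities:
        raise ValueError("need at least one daily forecast")
    daily_scores = [brier_score(p, outcome) for p in daily_probabilities]
    return sum(daily_scores) / len(daily_scores)


# A hypothetical question that resolves "yes" after four days.
early_bird = [0.9, 0.9, 0.9, 0.9]  # confident from day one
latecomer = [0.5, 0.5, 0.5, 0.9]   # sits on the fence until the last day

early_score = time_averaged_brier(early_bird, 1)
late_score = time_averaged_brier(latecomer, 1)
print(f"early bird: {early_score:.3f}")
print(f"latecomer:  {late_score:.3f}")
```

Both forecasters end on the same answer, but the one who committed early earns the lower, better score. The same squaring punishes confident mistakes heavily: saying 90 percent about something that does not happen costs far more than a cautious 50 percent.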

Tetlock’s jibe about experts and dart-throwing chimpanzees has been overemphasized. Experts whose careers are built on their research are simply more likely to have a psychological need to defend their position, a cognitive bias. During the IARPA tournament, Tetlock’s research group put forecasters into teams to test their hypotheses on “the psychological drivers of accuracy,” and discovered four:

(a) recruitment and retention of better forecasters (accounting for roughly 10% of the advantage of GJP forecasters over those in other research programs);

(b) cognitive-debiasing training (accounting for about a 10% advantage of the training condition over the no-training condition);

(c) more engaging work environments, in the form of collaborative teamwork and prediction markets (accounting for a roughly 10% boost relative to forecasters working alone); and

(d) better statistical methods of distilling the wisdom of the crowd—and winnowing out the madness… which contributed an additional 35% boost above unweighted averaging of forecasts.

They also skimmed off the best forecasters into a team of superforecasters, who “performed superbly” and, far from being lucky once, improved their performances during the tournament. Tetlock’s advice for people who want to become better forecasters is to be more open-minded and attempt to strip out cognitive biases, like Neil Weinstein’s unrealistic optimism. He also identified “overpredicting change, creating incoherent scenarios” and “overconfidence, the confirmation bias and base-rate neglect.” There are many more, and Tetlock’s work indicates that overcoming them helps individuals make better judgments than following the wisdom of crowds, or just flipping a coin.