On May 4, 1964, the United States Congress resolved that bourbon is a distinctly “national” product. Unsurprisingly, it was a pair of politicians from the land of Maker’s Mark, Kentucky’s Senator Thruston Morton and Representative John Watts, who introduced this resolution; it called for a prohibition on the importation of anything designated as “Bourbon whiskey.” It was neither the first time nor the last that arguments over bourbon’s ingredients and provenance entered the public fray.

Indeed, such debates began centuries earlier, before the American Revolution, in the region that would become New England. In the seventeenth century, colonists there tried to distill everything from berries to pumpkins to corn. They also experimented with making rum, using molasses exported from the Caribbean, a pipeline that dried up after the Revolution, effectively ending rum distillation in the North American colonies.

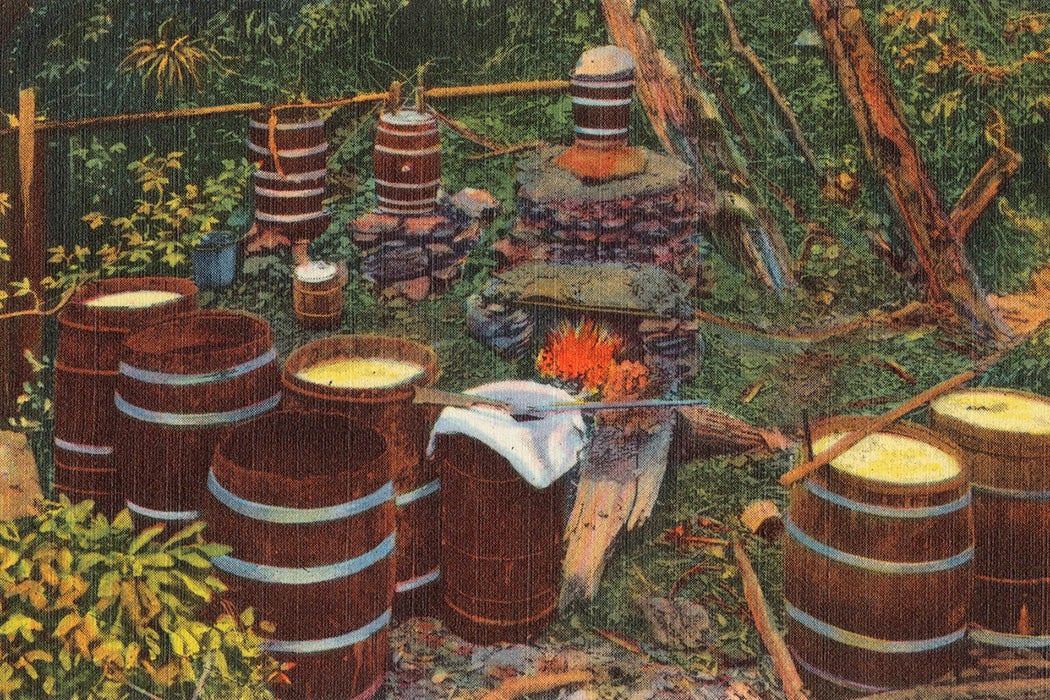

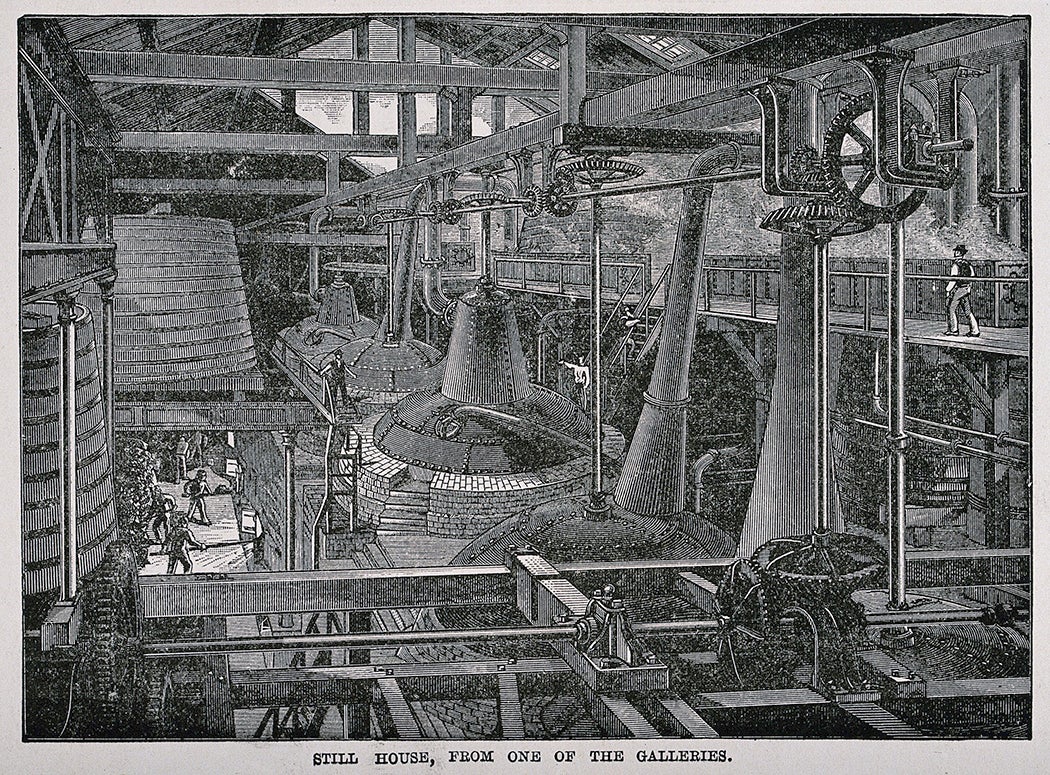

Distilling whiskey, as the colonists learned, is uncomplicated; there are but three ingredients: grain, water, and yeast. It is time, however, that is the fourth “metaphysical ingredient” that “completely changes whiskey’s taste,” observes journalist Clay Risen in Bourbon: The Story of Kentucky Whiskey. In American whiskey, the “mash bill” is the recipe each distiller uses as the basis of their brew: the percentages of corn, rye, wheat, or barley. After the grain is mixed with water and yeast (which produces the alcohol), the mixture is run through a still. At this point, the liquid is a clear liquor and is not yet considered whiskey. Poured into barrels, this liquor is then left to age and mix with both oxygen and chemicals in the wood, turning what was clear into a drink the color of gold.

From the very start of US history, whiskey played a key role in shaping American consumption habits and the country’s political and legal culture. The end of the eighteenth century saw the settlement of a large number of Scots-Irish immigrants in Maryland and Pennsylvania. According to writer Seán S. McKeithan, these newcomers brought with them “the strongly held opinion that whiskey, not bread, was the staff of life, the equipment for distilling, and an expert knowledge of the distiller’s art.” Rye was a central crop for them, while corn “grew like wildfire in Kentucky.” Farmers around the country found it easy to distill, ship, and earn profits from whiskey made from surplus crops, and records from colonial legislatures nationwide reveal how pervasive such distilling was, contradicting the claims of Kentuckians that their own Elijah Craig was America’s first real “bourbon” distiller. Moreover, strong evidence identifies colonial Virginia, where corn was the primary crop, as bourbon’s birthplace.

At the time, liquor was a “necessity of life,” writes historian Henry Crowgey in Kentucky Bourbon: The Early Years of Whiskeymaking; distilling it was akin to the “making of soap, the grinding of grain in a rude hand mill, or the tanning of animal pelts.” It satisfied a thirsty public. General George Washington insisted on liquor rations for soldiers, stating that “there should always be a Sufficient Quantity of Spirits with the Army” because “in many instances, such as when they are marching in hot or Cold weather, in Camp or Wet, on fatigue or in Working Parties, it is so essential that it is not to be dispensed with.”

The Tax That Started It All

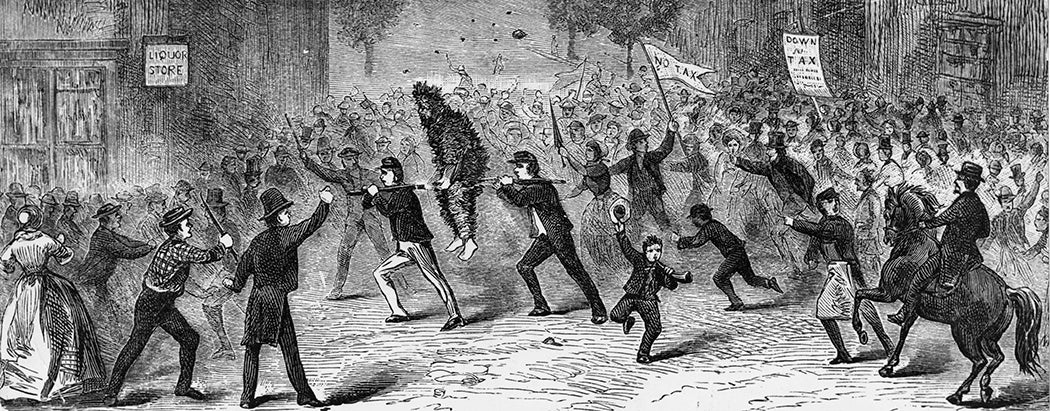

All interesting stories in American history begin with a levy. So too for whiskey. On March 3, 1791, Congress instituted an excise tax on domestic and imported alcohol. Many objected. Small-scale farmers in western Pennsylvania, many of whom distilled excess grain into whiskey, were particularly galled and refused to pay the tax. Their collective refusal became known as the Whiskey Rebellion.

These farmers believed that a “remote central government” passing this tax “would bring the nation to the brink of an ‘internal war,’” says historian Thomas Slaughter in his book The Whiskey Rebellion: Frontier Epilogue to the American Revolution. On this score they disagreed with settlers in the East, one more in a list of contentious issues dividing them. There was the federal government’s role in the lives of individuals; the national debt; Native American wars; slavery; and the economy. These were all divisive matters, and people living on the growing Western frontier, including those in Pennsylvania, held fast to a vision of the future based on individual economic and political autonomy. They resented the perceived excesses of the national government. And their numbers were growing.

In the 1780s the population in the West tripled as disgusted Easterners looked askance at “the amount of whiskey consumed,” says Slaughter, and “the uninhibited violence of those under its influence.” The whiskey tax was the breaking point. In 1794, Washington ordered his troops to end the rebellion. Though quashed, it foretold how fraught the relationship between vice and regulation would continue to be.

Consuming and Making Whiskey

The suppression of the Whiskey Rebellion did not stop the flow of whiskey, even if it did contribute to a decrease in alcohol consumption during the Second Great Awakening (1790-1840) and the temperance movement. Indeed, throughout the nineteenth century and into the twentieth, Americans tried, and failed, to control their liquor. Whiskey always prevailed; its popularity grew alongside its cultural significance.

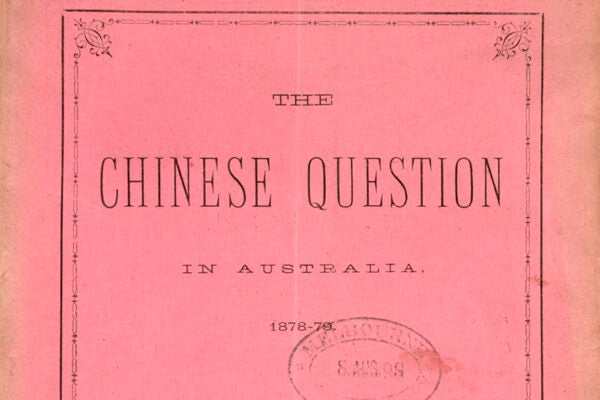

The American South offers a critical case in point. As distillation became more efficient in the nineteenth century, distillers shipped their goods throughout the region, and bourbon “began to settle into the collective consciousness and consumption patterns,” according to McKeithan. “Bourbon is of particular importance in the study of white southern masculinity because of its distinctly constructed white southern maleness,” he says, contrasting it with food production more generally, a domain that has “historically been the province of its women.”

The Whiskey Industry’s Development

In the late nineteenth century, distillers grew frustrated with the impact of market fluctuations on business. Enacted in 1812, a national excise tax on whiskey rose every few years. It was not, however, retroactive; anything a distiller produced before the hike could be taxed at the previous, lower rate. This led to cycles of over- and undersupply, as distillers haphazardly decided whether to overproduce to avoid larger bills in the future.

By the 1870s, a group of distillers had had enough and agreed not to glut the market; they created an oligopoly. The Whiskey Trust began in 1887 in Peoria, Illinois, and producers in this trust made whiskey quite unlike what we know today; they added color and flavor to unaged spirits. The Whiskey Trust was short-lived; it was outlawed in 1890 with the passage of the Sherman Antitrust Act. In response, the Whiskey Trust incorporated itself, changing names several times over the twentieth century. It ended up as the National Distillers Products Company, one of the few entities controlling the liquor market; in the 1980s, Jim Beam acquired most of its brands.

The Federal Government Came Knocking

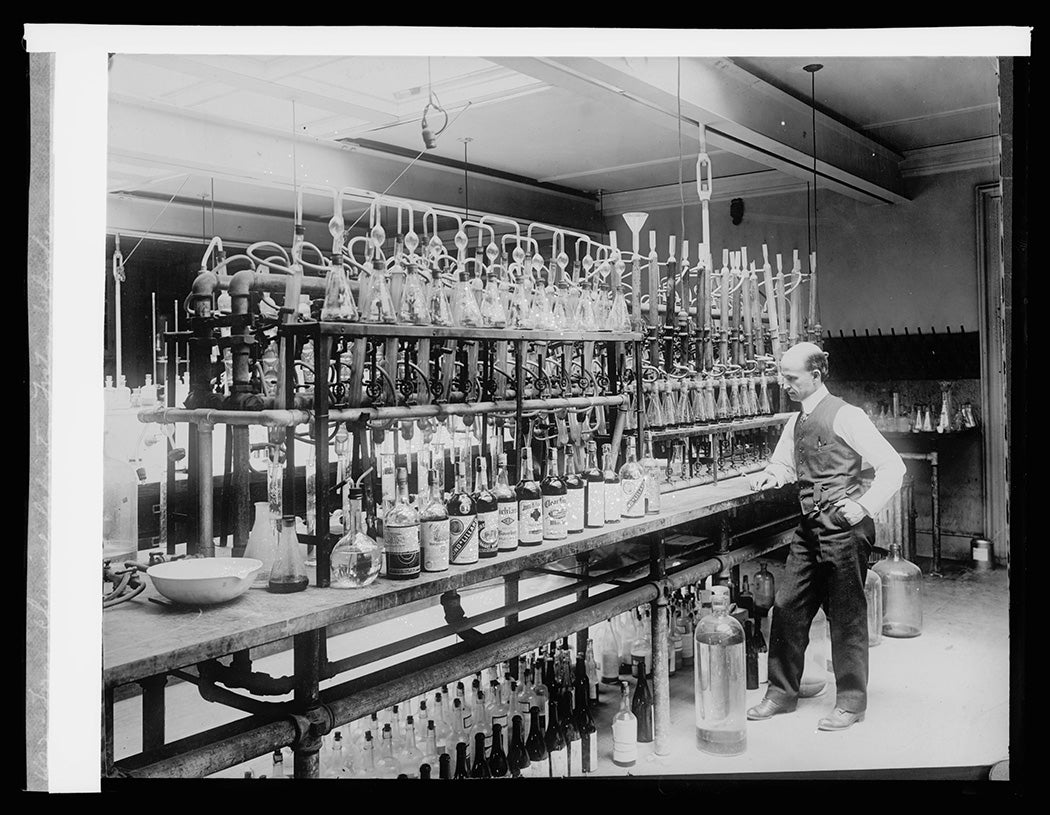

Settling on and regulating the taste and labelling of whiskey was a decades-long battle. What today is recognized as bourbon emerged only after the Civil War. Until the late nineteenth century, most whiskey was “white,” meaning it was unaged, according to historian Gerald Carson. In some cases caramel was added to color it; those who did so were known as “rectifiers.” At the time, it was almost impossible for an American to know exactly what was in their bottles of whiskey. Rectifiers purchased whiskey from distillers and then changed the taste profiles. They were not required to label additional ingredients or to indicate where the original liquor came from.

The Bottled in Bond Act, passed in 1897, was the first legal attempt to regulate the taste and process of distilling whiskey. Six rules had to be heeded in order to obtain the “bottled in bond” label: distillation had to take place at a single distillery; it had to occur within a single season; the whiskey had to be aged at least four years; it needed to be bottled at 100 proof; labels had to include the name of the distillery and bottling location; and water was the only permitted additive.

The Pure Food and Drug Act of 1906 put rectifiers at further risk. Chemist Harvey Washington Wiley, its creator, abhorred mislabeled and adulterated products, and his legislation represented an attempt to rid the whiskey market of everything he considered impure. The regulation required rectifiers to label their products “imitation whiskey,” a move that hurt business. Four years later, the law was amended; rectifiers could now label their product as “blended” or “compounded,” removing the “pejorative connotation” of imitation.

After the repeal of Prohibition in December 1933, regulation of the whiskey industry became even more important as a source of income for the federal government. In 1935, it created the Federal Alcohol Administration, a consumer protection agency, which imposed stricter rules about whiskey labelling. These rules stipulated that distillers had to state, among other things, the age of the whiskey, which determines whether it is “light” or “heavy-bodied”; the type of oak barrels it was stored in; and more.

Of course, when it comes to questions of a whiskey’s body, individual taste prevails. Some drinkers prefer light whiskies aged in reused barrels, like scotch. Others prefer the heavy-bodied taste of bourbon, which is required by law to be aged in new charred oak barrels. That said, the taste we associate with quintessential “American” whiskey—rich, sweet, and heavy—was rooted in this initial labelling regulation.

After Prohibition, bourbon was declared a distinctly American product, says McKeithan, a “drink of the working class.” Despite the growing demand for exotic, foreign liquors in the Sixties, Americans have returned to whiskey, and bourbon in particular. Today, bourbon is still a “politically charged substance,” and the “weight and gravity of Bourbon register both symbolically through the drink’s historical legacy and sensuously through its bite—the burn that makes learning to drink whiskey hard work and separates the proverbial men from the boys.”

As an American woman of color, I don’t fit into the typical understanding of a modern bourbon drinker. Nevertheless, I feel the “heaviness” of bourbon with every sip: literally, because of its rich and sweet scent, but also symbolically, as McKeithan argues, because each distillery, each bottle, each glass is intimately connected with the histories of American business, governmental regulation, and culture.

Over centuries, what made American whiskey “American” was determined by states and their regulations. Even though corporations control most of the market today, the nature of distilleries as a business model, alcohol as a good, and the role of the federal government together solidified bourbon’s current place in American taste preferences.

Conclusion

Corn and America have gone hand in hand since the country’s earliest days. In the late nineteenth and twentieth centuries, the legal requirements and regulations of whiskey were both prescriptive and descriptive, and worked to differentiate “real” whiskey from “imitations.” These rules standardized a product and strengthened the position of distilleries as critical in developing American taste and culture. Since 2009, whiskey sales have surged at home and abroad, bolstered by small-batch, craft distillers. Economic and business historians have long stressed the importance of government in the rise of American industry, and liquor is no exception. In this case, regulation and taxation not only contributed to the government’s coffers but also shaped the very taste and popularity of bourbon to this day.

Editor’s Note: This article was edited to add a missing period.

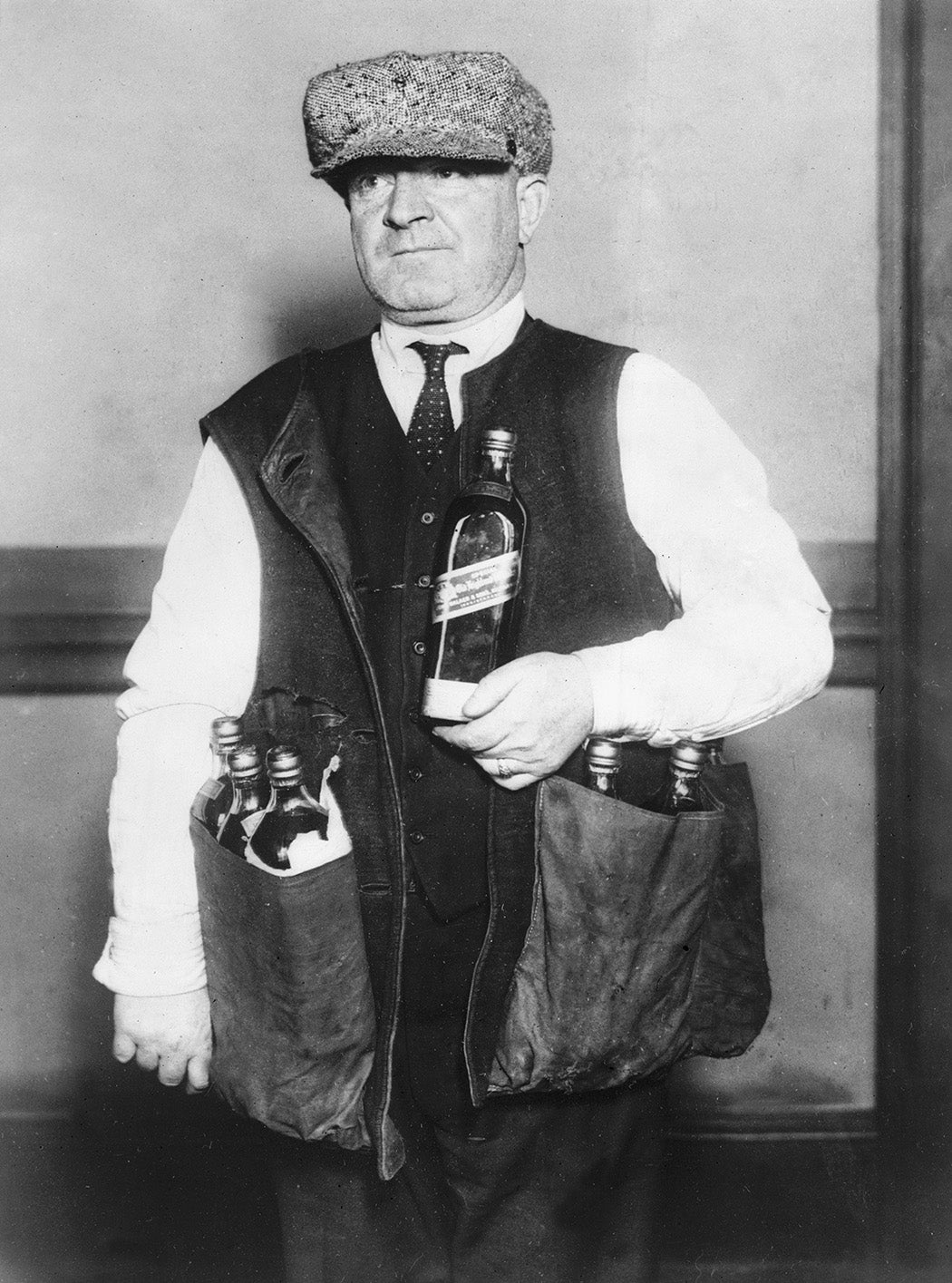
