Germany, 1972. Almost thirty years had passed since the end of World War II and the partition of the defeated nation into separate and unequal states. Beginning in 1961, its former capital was divided by the embodiment of the Cold War, the Berlin Wall. It was one country that had become two, a fulcrum of the ideological, economic, and military struggle between East and West, capitalism and communism, that largely defined the second half of the 20th century. But in the early 1970s, another global struggle—the modern environmental movement—was growing, and within that movement, a quiet revolution was about to start in the halls of the West German parliament.

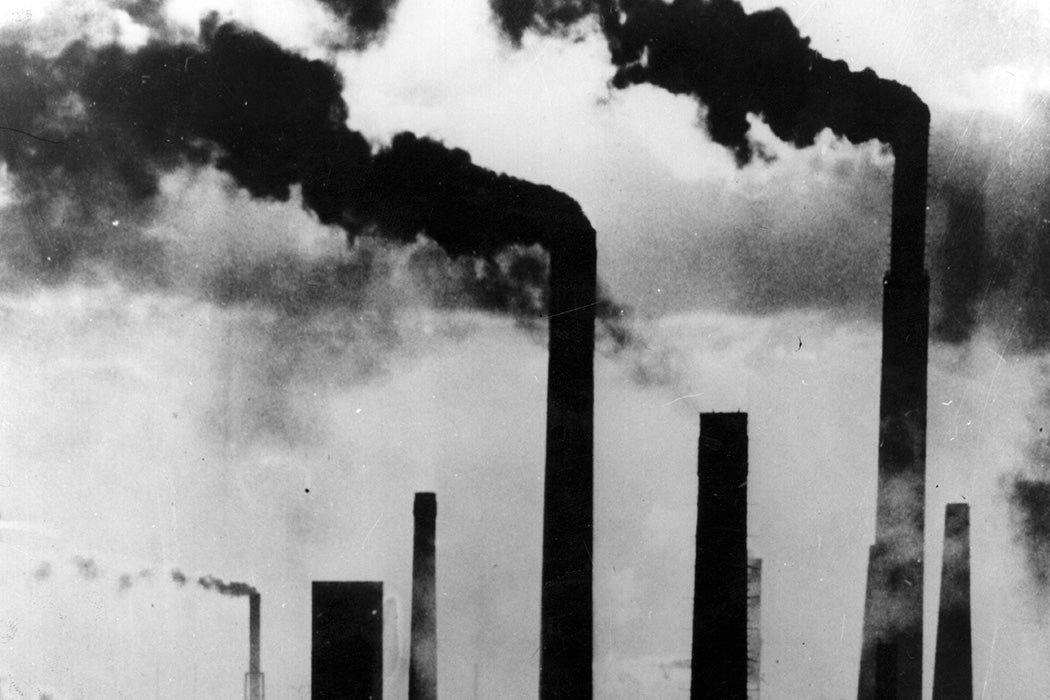

Pollution was nothing new. Humanity had been dumping the waste of its industry into the air, water, and soil for as long as industry had existed, but by the late 1960s, the consequences of that destruction could no longer be ignored. Rivers caught fire, smog made city air unbreathable, and industrialized areas faced the new threat of acid rain. Around the world, this led both to a new wave of environmental activism and to laws like the U.S. Clean Air Act and Clean Water Act.

The Birth of the Precautionary Principle

In 1972, West Germany amended its constitution to address the sulfur dioxide and nitrogen oxide pollution that caused acid rain, which was, in turn, causing widespread damage to German forests. Soon, that amendment worked its way into a federal statute named Bundes-Immissionsschutzgesetz. It had two stated goals: “to protect people, animals, plants, and other things from harmful environmental effects” and “to take precautions against the occurrence of harmful environmental effects.” That second part, the three simple words “to take precautions,” changed everything.

At first, West German standards for air pollution were actually weaker than those in the United States and other industrialized countries. In 1982, these standards permitted the emissions of almost twice as much sulfur dioxide, and three times as much particulate pollution, as U.S. regulators allowed. But behind the scenes, the precautionary principle, known in German as Vorsorgeprinzip, was becoming more central to regulation in West Germany. Whereas other nations limited their regulations to lists of specific chemicals with strong evidence of damage to human or environmental health, German lawmakers and regulators started to apply the standards to whole classes of chemicals—such as acid rain-causing nitrogen oxides—even those where the science had not yet proven harm.

Distilled to its most basic idea, the precautionary principle is a kind of Hippocratic Oath for the planet. Just as doctors swear to “first, do no harm,” regulators under a precautionary system would err on the side of caution. In fact, one of the classic examples of the success of precautionary action is in medicine, and predates the codification of the principle itself by over a century.

John Snow and the Broad Street Pump

In the first half of the 19th century, the world found itself fighting one of the first truly global pandemics: cholera. Though outbreaks had occurred for centuries, the explosive spread of the disease through Asia, Europe, and the Americas, helped along by modern trade routes and the global reach of the British military, was unprecedented. Hundreds of thousands died in three separate pandemics between 1817 and 1860. Ships flying a yellow flag were avoided, even by pirates, as that was the symbol of infection.

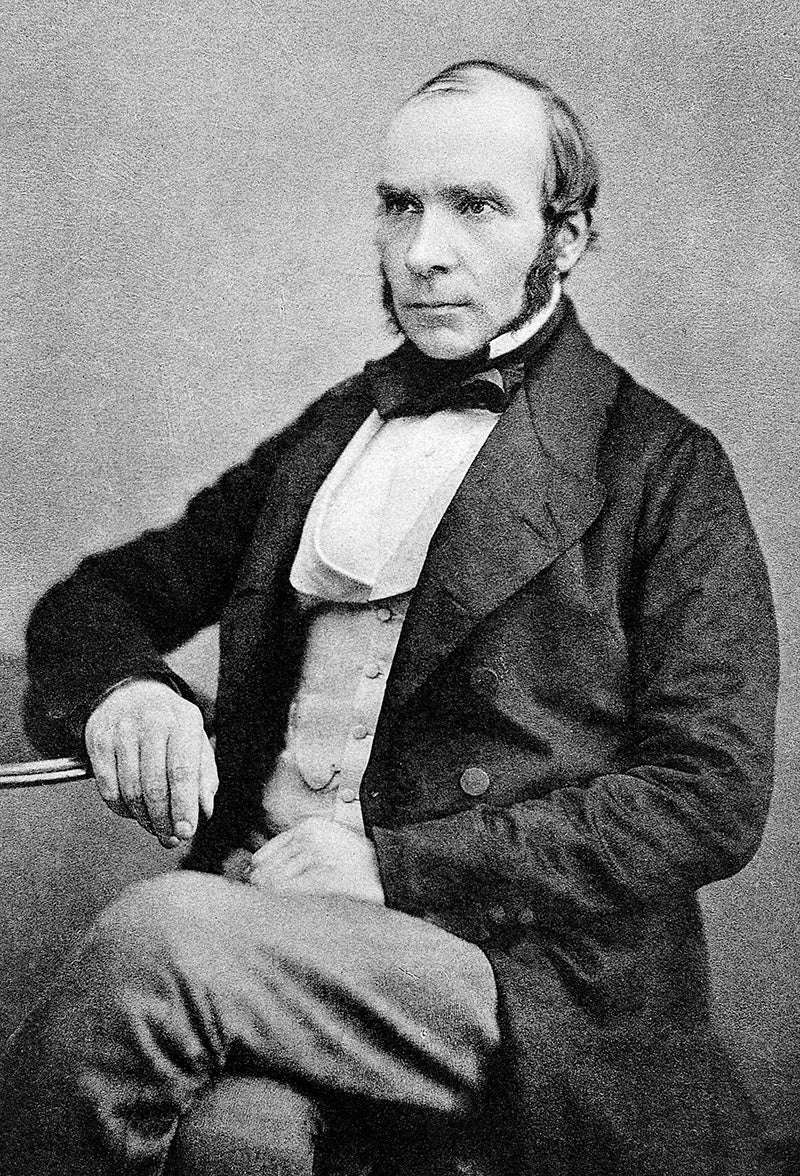

In one of the most famous true detective stories of all time, a physician named John Snow meticulously tracked a cholera outbreak in 1854 London. At the time, doctors had no idea what caused cholera or how it spread. A leading theory blamed miasma, or bad air. Snow’s work suggested that the London outbreak was centered on a single source: a water pump on Broad Street. Water, not air, seemed to be carrying the deadly disease. His recommendation was simple and effective: take the handle off the pump.

Snow’s work is seen as the beginning of epidemiology as a science, and also an early case of effective action before scientific certainty. Snow lacked knowledge of the specific pathway of the disease, or even that it was caused by a bacterium at all. That would come decades later. What Snow had was a pattern, and that was enough to shut off the pump as a precaution, which then proved effective.

The Principle Goes Global

Soon after its modern emergence in Germany, the principle spread across Europe and into the wider world. In 1990, the Bergen Ministerial Declaration on Sustainable Development became one of the earliest international agreements to put the principle clearly into its text, in not only a purely environmental but also an economic frame. It stated: “Where there are threats of serious or irreversible environmental damage, lack of full scientific certainty should not be used as a reason for postponing measures to prevent environmental degradation.” This language made its way into dozens of important treaties and agreements. The following excerpts, all from the two years after Bergen, show its rapid spread:

“…preventing the release into the environment of substances which may cause harm to humans or the environment without waiting for scientific proof regarding such harm…” – Bamako Convention, 1991

“…where there is a threat of significant reduction or loss of biological diversity, lack of full scientific certainty should not be used as a reason for postponing measures to avoid or minimize such a threat…” – Biodiversity Convention, 1992

“…where there are reasonable grounds for concern…even when there is no conclusive evidence of a causal relationship between the inputs and the effect…” – OSPAR Convention on protection of marine life, 1992

“…even when there is no conclusive evidence of a causal relationship between inputs and their alleged effect…” – Baltic Sea Convention, 1992

In 1992, more than 150 countries adopted the Rio Declaration, the biggest environmental agreement in history to that point, and the basis for many of the pollution and climate treaties that have followed. Its text includes a statement that “the precautionary approach shall be widely applied by States according to their capabilities.”

As soon as the precautionary principle started to take hold, the industries it directly challenged began to push back. It was one thing to ban the handful of chemicals that had been strongly proven to cause harm. Many of those might be phased out over time anyway, to avoid costly lawsuits and public relations disasters. But to limit pollution as a whole, to step ahead of the slow process of testing and require industry to prove a chemical was safe, rather than environmentalists to prove it wasn’t—that was an existential threat to entire industries.

Precautionary Principle vs. Cost-Benefit Analysis

In the United States, United Kingdom, and other nations, political and judicial opponents rallied around Cost-Benefit Analysis (CBA) as an alternative to the precautionary principle. Professor, legal scholar, and author Cass Sunstein became one of the most powerful voices in this camp, writing Laws of Fear: Beyond the Precautionary Principle, as well as numerous journal articles on the topic. In one, “Cost-Benefit Analysis and the Environment,” from 2005, he writes that “the Precautionary Principle approaches incoherence,” and that CBA is superior because it is a process driven by data and real consequences, rather than fear of future harm.

Sunstein is not an easy opponent to dismiss. He is the second most cited legal scholar of all time, the author of over 40 books, a Harvard professor, and former administrator of the Office of Information and Regulatory Affairs under President Obama. Neither a consistent progressive nor conservative, he is best known as a judicial minimalist, who believes that courts, the Supreme Court included, should rule on specific cases but not make wider policy with their judgements. When he questions the logical basis for the precautionary principle, his arguments are worthy of careful consideration.

This debate, precautionary principle vs. cost-benefit analysis, plays out in every polluting—or potentially polluting—industry on Earth, but has found one of its most controversial and divisive subjects over the past few decades in the fight over genetically modified organisms, or GMOs.

Beyond the Binary: GMOs

When it comes to GMOs, the Atlantic Ocean is a border between two very different worlds. In the United States, agriculture has become dominated by modified strains, to the point that 92 percent of U.S.-grown corn is genetically modified, along with similar shares of soybeans, cotton, and a few other crops. The number of GMO crops in American fields is actually surprisingly small, but they make up a massive piece of the overall agricultural pie. Europe, precautionary as ever, has been much slower to adopt these strains. The biggest GMO corn producer there is Spain, whose crop is still only 20 percent GMO. With a wide range of consequences, from international hunger relief to pollinator biodiversity to drinking water quality, this is one of those issues that quickly translates differences in policy theory into sweeping real-world effects.

Of course, the world is far more than just the U.S. and Europe. As outlined by Jennifer Clapp in her article “Unplanned Exposure to Genetically Modified Organisms: Divergent Responses in the Global South,” developing nations have a wide range of policies toward GMOs that don’t necessarily fit in the precautionary vs. cost-benefit binary. Each country’s stance is shaped by its economy, its infrastructure, and, Clapp argues, especially by its existing trade and cultural relationships. As urgent as food aid is, even during a famine, it doesn’t erase history.

The Present and Future of the Principle

The United States has taken a path in environmental matters that is, overall, much closer to the cost-benefit side than to the precautionary principle. Today, many areas with residential neighborhoods (overwhelmingly communities of color) located near chemical plants or refineries experience so-called “cancer clusters.” These patterns of high cancer rates are eerily reminiscent of John Snow and his map of cholera victims, but in a modern American legal framework, it is often difficult to prove the link between chemical exposure and cancer to a satisfactory legal standard. Precaution was enough to shut off the Broad Street pump. It’s rarely sufficient to shut down, or even fine, an oil refinery on the Gulf Coast.

As we venture deeper into the 21st century, two overlapping crises would seem to provide the ultimate test of setting present policy to prevent future harm: climate change and species extinction. Many scientists agree that we are now solidly in a new geological epoch of Earth’s history, the Anthropocene, where human effects on the environment have become dominant forces on the planet. In the case of climate, we have an incomplete but solid understanding of the effects we can expect from warming and sea level rise. On the other hand, we are only beginning to understand the cost of species loss. What numbers can we plug into a cost-benefit analysis for the value of a unique bird or mammal or insect or plant? What is a rainforest worth? Does value, in the sense of monetary cost vs. monetary benefit, even make sense in the context of a species or an ecosystem, or for that matter, the habitability of the planet as a whole?

The question of precautionary principle vs. cost-benefit analysis will not be resolved anytime soon. As with so many binary arguments, like the famous developmental question of nature vs. nurture, the answer is almost certainly a mixture of the two. Even the strongest precautionary proponent would agree we need to question, test, and revise our assumptions about future harm, and even the most ardent anti-precautionary economist will concede that some boxes—chemical, biological, or otherwise—are probably better left unopened. Both concepts are tools, and powerful ones when put to an appropriate task. Whatever the proper balance between the two, to survive in a changing world, where our choices can have vast and sometimes irreversible consequences, “first, do no harm” seems like a promise we could do well to make, and keep, more often.