The terrifying prospect of killer robots has been making headlines lately. Two recent projects from the Defense Advanced Research Projects Agency (DARPA) have sparked fears of machines that can decide whether to engage a target without human oversight. The first project, the Fast Lightweight Autonomy (FLA) program, aims to create drones that can maneuver through complex environments without human input, relying instead on sensor data.

The second project, Collaborative Operations in Denied Environment (CODE), aims to improve the bandwidth and efficiency of air attacks. DARPA wants to create aircraft that can complete a mission despite communications interruptions and that need as few as one human supervisor (as opposed to the usual dedicated pilot, sensor operator, and team of analysts). This feat would be accomplished through collaborative autonomy, which allows a group of drones to share and evaluate information. The drones can then assess the situation as a group and compare the conditions to programmed rules of engagement. Individually, each project is fairly innocuous, but together they have the potential to create robots that can fly around and engage targets without human input.

These projects prompted Human Rights Watch to release a report called “Mind the Gap: The Lack of Accountability for Killer Robots.” The crux of the report’s argument is accountability. Who will be held accountable for wrongful deaths caused by these killer robots? This argument, however, is based on the notion that we hold people accountable in war. As Princeton University professor Robert Keohane pointed out in his article Abuse of Power: Assessing Accountability in World Politics: “All doubters have to do is look at the abuses committed in Abu Ghraib prison and the conditions of the U.S. prison in Guantanamo Bay, Cuba, and reconcile it with the fact that no high-level U.S. officials have been held accountable for the policies that enabled or even facilitated these violations of presidential pledges and international law.”

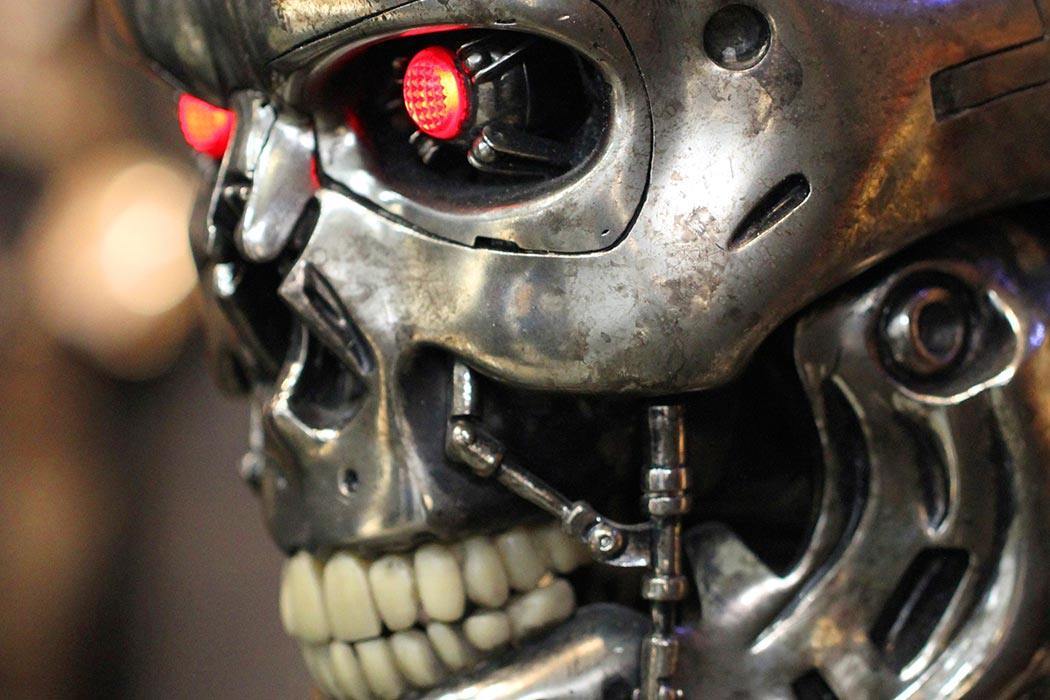

Putting that controversial debate aside, there is still the logistical difficulty of defining “killer robot.” In the broadest sense, a robot is a machine that can complete complex tasks. A stricter definition of robot narrows it further: “a programmable, self-controlled device consisting of electronic, electrical, or mechanical units.” However, even this definition leads us into trouble. Take telephone operators, for example. We used to rely on people to connect our phone calls to the appropriate person; now a machine does that for us. This new system is programmed, self-controlled, and electrical. But calling a modern switchboard a robot just doesn’t feel right. In fact, it is hard to decide what a robot is without relying on imagery from science fiction, which is fair, since science fiction is where the notion of a robot comes from.

The word robot derives from the Czech word “robota” (translated as “forced labor”) and was introduced by Karel Čapek in his play R.U.R. The play depicted robots as human-like: capable of completing human tasks, but soulless. It is a dystopian tale of the rise of robots and humanity’s destruction. The moral of the story is that the human quest for a life of leisure and luxury will result in a greedy society, one that will not work together to save the human race from extinction.

Perhaps the modern idea of a robot is still tied to its literary past. We are far more comfortable labeling human-like machines robots. Could it be that our fear of robots is also linked to literary imagination and not the technical reality?

A further complication is whether killer robots really are autonomous. All robot behavior is rule-based, governed by programming. The CODE robots, for example, rely on military rules of engagement to decide when to engage a target. The robot itself does not make the determination; rather, humans created and carefully crafted the rules that govern the robot’s actions. Although Human Rights Watch argued that the problem was the robots’ autonomy, they were, paradoxically, also concerned that robots would be dangerous because they wouldn’t use human judgment. If this is the true concern, shouldn’t we support more development in human-like artificial intelligence?

In 1978, the danger on the horizon was space weapons. Preventing an arms race in space had many of the same challenges as preventing killer robots. It was difficult to define “space,” “peaceful uses,” and “force,” to name a few of the challenging concepts from that debate. Further, it was possible for research in the field to progress without creating a weapon. Preventing space weapons entirely would require preventing advancement of space technologies that could lead to space weapons, not just preventing weapon development specifically. Similarly, to prevent killer robots, many lines of development in artificial intelligence would need to stop. It is unlikely that they will.

It might be beneficial to adopt a suffocation approach, which aims to identify the key technologies needed to weaponize technological developments and prohibit developments in those areas. This could halt serious development efforts aimed at creating killer robots. However, it is possible that it will be too difficult to identify precursor technologies, or that the technology will be too valuable to ban. Therefore, it might be best instead to concentrate our efforts on banning the application of such technologies to warfare.

However, we might also ask: haven’t killer robots existed for some time now? Take guided missiles, for example. The Joint Direct Attack Munition is considered a “smart weapon.” These missiles use the GPS locations of their targets to guide themselves, with a margin of error of 40 feet. This technology was an improvement over older systems, which relied on heat signatures to mark targets and were unreliable in poor weather conditions. The Joint Direct Attack Munition is a programmable, self-controlled, electronic machine that kills people; therefore, it could be considered a killer robot. Yet we aren’t panicked.

The debate is sure to continue. However, what’s most noteworthy is the obvious question that has been carefully excluded from the discourse: why is it all right for a human to decide to kill another human? It is unlikely that we will make meaningful progress towards stopping the progression of horrific weapons, killer robots capable of torture and destruction, if we are unwilling to examine questions of human dignity, inequality, and power.