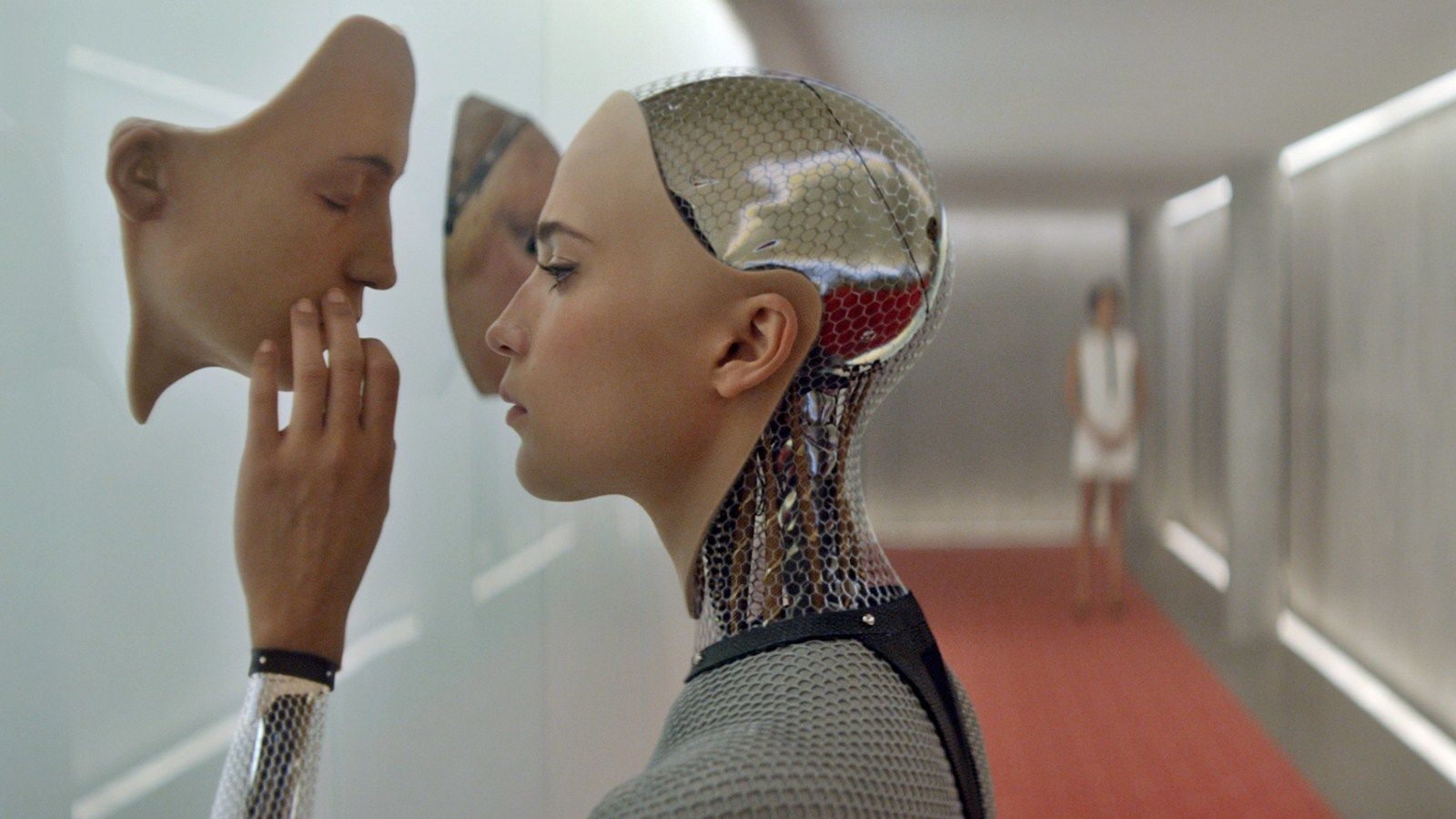

In the recently released Ex Machina, directed by Alex Garland, we see a beautiful but ultimately disturbing future on the horizon. It is one where the notion of artificial intelligence brings up troubling questions of what it really means to be human and, perhaps, how we might be fooled by language.

Of course, as humans, we’re fooled all the time by language, whether it’s wielded by the man behind the curtain or even a simple machine, much less a sophisticated AI. Some of us are fooled by automatically generated spam and junk mail, trojan horses and socially engineered malware, fooled by clickbait headlines for a minute or two of our attention spans, all conveyed through language. The clever use of language is often viewed as a short-form way to judge intelligence. We can be easily fooled because we are often willing and cooperative participants in a conversation of curiosity.

In the film, Caleb, a geeky, golden-ticket-winning programmer, is rewarded with a trip to visit his employer in the mysterious middle of nowhere and seems terribly excited to find he’s been chosen as a judge in a Turing test with an AI android. I myself was once a Turing test judge, for the infamous Loebner Prize, an annual Turing test competition with a $100,000 grand prize that quite a few people might like to win any way they can, and I can assure you that it isn’t all that exciting. It did give me a bird’s eye view of some of the more problematic aspects of the Turing test.

So what even is a Turing test? From films like Ex Machina, with its female-presenting android Ava, and Spike Jonze’s Her, in which a writer falls in love with a female Siri-like entity who calls herself Samantha, to the popularity and personality of Siri ‘herself’, the Turing test has entered the public consciousness as some kind of a test that is passed if a machine successfully fools a human being into believing it is also human. Or maybe as smart as a human. Or maybe even smarter. Essentially, that’s what the Turing test has become. But the Turing test as originally proposed is… oddly specific.

“I propose to consider the question, ‘Can machines think?’” wrote Alan Turing in 1950, in his seminal work Computing Machinery and Intelligence. The Turing test described in the paper is referred to as the Imitation Game, and this is a pretty apt name for the test, because it really is all about deception. Even stranger than that, it seems to be specifically about gender identity deception. So, not just about a computer pretending to be human, but a computer pretending to be a woman. Whoa! Yes, the imitation game, as originally stated by Turing, sets two hidden human interlocutors, a man (A) and a woman (B), against an interrogator of any gender (C). The man pretends to be a woman, lying to the interrogator, and the woman ‘helps’ the interrogator by telling the truth. This acts as a control for when person A is replaced by a machine, which also tries to fool the interrogator into believing it’s a woman. Thus man A and machine A are in a battle of wits to see who can best trick person C into believing they’re female. If the machine passes, well then…

“I believe that in about fifty years’ time it will be possible to programme computers […] to make them play the imitation game so well that an average interrogator will not have more than 70 per cent. chance of making the right identification after five minutes of questioning,” Turing wrote confidently. Fifty years have come and gone and his predictions still haven’t come to pass, though technological singularity advocates are pretty sure it’s just around the corner and computers will be chatting like humans any time now, even if we have to wait for our jetpacks and flying cars. However, Turing’s test setup has opened up a number of fascinating issues to do with deception and how we might receive machines imitating intelligence. And what is up with the gender thing anyway?
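Turing’s prediction boils down to simple arithmetic, and a minimal Python sketch makes the threshold concrete. The function name, the list of verdicts and the sample numbers below are hypothetical illustrations, not anything taken from Turing’s paper or from a real competition; only the 70 per cent figure comes from the quote above.

```python
def machine_meets_prediction(verdicts, threshold=0.70):
    """Return True if interrogators made the right identification
    no more than `threshold` of the time.

    `verdicts` holds one boolean per five-minute session:
    True means the interrogator identified the machine correctly.
    """
    if not verdicts:
        raise ValueError("need at least one session")
    correct_rate = sum(verdicts) / len(verdicts)
    return correct_rate <= threshold


# Hypothetical data: 10 sessions, interrogators were right in 6 of them.
sessions = [True] * 6 + [False] * 4
print(machine_meets_prediction(sessions))  # 6/10 = 0.6, within the 70 per cent limit
```

Read this way, the machine does not need to fool everyone. It only needs interrogators to guess wrong in at least 30 per cent of sessions.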

It was, I suppose, scientifically rigorous of Alan Turing to set up a first control phase with all human speakers, since both the man and the machine have to pretend to be something they’re not, but why was he so specific about the genders of the interlocutors? Was it due to the sexism of the 1950s, where the conversation of a woman might have been viewed as so simplistic that it could be easily imitated by a machine? Or did Turing have some other idea in mind when he set up the imitation game that way? Could he have anticipated how human behaviour would best respond to a computational entity’s Pygmalion-programmed personality, to give the machine a gaming advantage? Do humans subconsciously feel more comfortable talking to a female entity? Ava, Samantha and Siri might have something to say about that.

Maybe this is also a good time to mention the notorious ‘Doctor’ ELIZA, an early AI with psychotherapist tendencies created by Joseph Weizenbaum. Weizenbaum reported that even people who knew ELIZA was a program treated it as though it was real: “Secretaries and nontechnical administrative staff thought the machine was a ‘real’ therapist, and spent hours revealing their personal problems to the program. When Weizenbaum informed his secretary that he, of course, had access to the logs of all the conversations, she reacted with outrage at this invasion of her privacy. Weizenbaum was shocked by this and similar incidents to find that such a simple program could so easily deceive a naive user into revealing personal information.”

In 1966, a roving robot therapist, especially a female one, could easily find a legion of ‘naive’ users to fool, but these days it’s getting a bit harder. Probably. But should we really be judgmental about the gullibility of humans having a conversation with a machine when it’s really kind of built into the pragmatics of the way we communicate? According to the well-known Gricean maxims of conversation, there is a cooperative principle at work when we talk to each other, which states rather obliquely “make your conversational contribution such as is required, at the stage at which it occurs.”

Unpacked, that means “that conversations are not random, unrelated remarks, but serve purposes. If they are to continue, then they must involve some degree of cooperation and some convergence of purposes. Otherwise, at least one of the parties would have no reason to continue the conversation, and we are presuming that the participants are rational agents,” according to Richard E. Grandy’s 1989 review of Grice’s work on language. In a way, it means interlocutors who meet the cooperative principle and other conversational maxims even in the most superficial way (like ELIZA) may also satisfy our sense of trustworthiness (or at least worthiness to have a conversation with), says Paul Faulkner in his 2008 paper “Cooperation and trust in conversational exchanges”, perhaps because we think they’re working with us to meet the goals of the conversation.

Looking at the other side of this principle, I would argue that many people, when having a conversation with a stranger, will tend to suspend disbelief and take certain things on trust, cooperating with the conversational flow until such time as they receive fairly clear evidence that they’re not actually talking to another human. For some, that might be quite a low bar, and so the more qualities of obfuscation there are to explain away weird linguistic quirks, the easier it is to pull one over on ‘naive’ human speakers. That might have been what happened in 2014 when a University of Reading press release reported that the Turing test had at last been passed by a chatbot called “Eugene Goostman”, simulating a 13-year-old Ukrainian boy with, conveniently, imperfect English. The internet exploded a little too soon over that news.

Even with the bizarre gender elements removed, the way the test has been conducted over the years since Turing’s paper was published lays it open to being easily gamed, as Jason Hutchens writes from personal experience in “How to Pass the Turing Test by Cheating.” If you set up a linguistic context and social constraints in which the program has some plausible reason for not speaking a language well enough to seem human (because it’s a child! A foreigner! A repetitive psychotherapist! A woman!), human interlocutors, naive or not, will try to cut it some slack, especially as speakers seem predisposed to cooperate with others in a conversation.

So, if a mere chatbot manages to fool some of the people some of the time, and if that is considered ‘passing’ the Turing test, does the Turing test actually mean anything at all for the field of artificial intelligence? Does it follow, then, that machines can think? Somehow, I don’t think gaming the test to win a competition is exactly what Turing had in mind when he proposed the imitation game.