What is the “backfire effect”? Most citizens “appear to lack factual knowledge about political matters,” write Brendan Nyhan and Jason Reifler. Nyhan and Reifler coined the term “backfire effect” to describe how people who are shown evidence against their beliefs not only maintain those beliefs but can become even more strongly convinced of them.

In their review of the literature on meaningful participation in politics, Nyhan and Reifler note that other researchers have found that “many citizens may base their policy preferences on false, misleading, or unsubstantiated information that they believe to be true.” This misinformation is “related to one’s political preferences.”

Yet surprisingly few researchers have looked into attempts to correct factual ignorance or misconceptions.

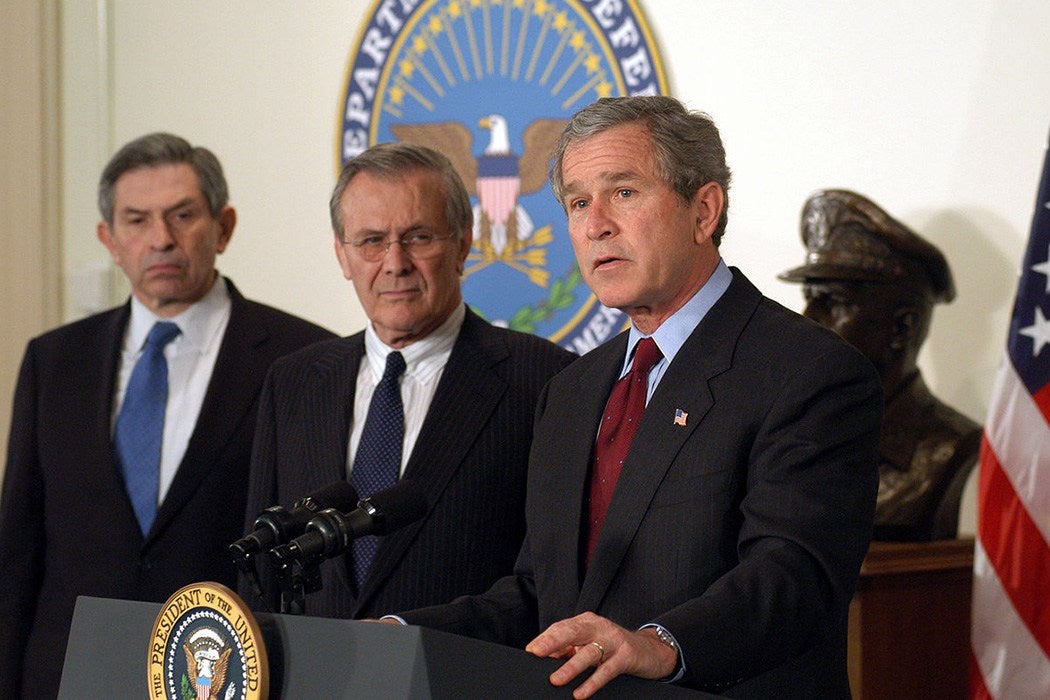

Nyhan and Reifler devised four studies with college undergraduates as their subjects to look precisely at the power of factual corrections. Part of their study used actual quotes on the issue of weapons of mass destruction (WMD) in Iraq in the lead-up to the 2003 U.S. invasion, and subsequent corrections. (Iraqi WMD were used as the main pretext for war by the administration of George W. Bush. None existed.)

Some of the students were given corrections to statements by American officials (fact checks, as we now know them). Nyhan and Reifler found that corrections “frequently fail to reduce misperceptions among the targeted ideological groups.” They also observed the “backfire effect,” “in which corrections actually increase misperceptions.” Some people double down on their misconceptions after being shown proof that they’re wrong, becoming more convinced of their opinion.


Specifically, for very liberal subjects, “the correction worked as expected, making them more likely to disagree with the statement that Iraq had WMD.” For those who called themselves liberal, somewhat left of center, or centrist, there was no statistically significant effect. For conservatives, the corrections backfired: those given “a correction telling them that Iraq did not have WMD were more likely to believe that Iraq had WMD.”

They found that “direct factual contradictions can actually strengthen ideologically grounded factual beliefs—an empirical finding with important theoretical implications.”