Maybe you’ve heard that in a future in which climate change makes it harder to grow crops, we’ll all be dining on sustainable foods formulated from tiny algae or their giant cousin, kelp. Today, we tend to think about these foods as appealingly “natural”—if not naturally appealing. But, as American Studies scholar Warren Belasco writes, when algae-as-food first became a widespread dream in the years after World War II, it was viewed as a marvel, not of nature, but of technology.

By the 1940s, Belasco notes, the world’s population had doubled in just four decades, and it was showing no signs of slowing down. Many feared that agriculture couldn’t produce enough food to feed everyone, and not a few experts turned to ideas that until then had been the domain of science fiction. Some hoped to synthesize carbohydrates, fats, and proteins from coal, petroleum, or even air. Numerous biochemists investigated proposals for artificial photosynthesis, hoping to improve on plants’ methods, which convert less than one percent of sunlight to usable food.

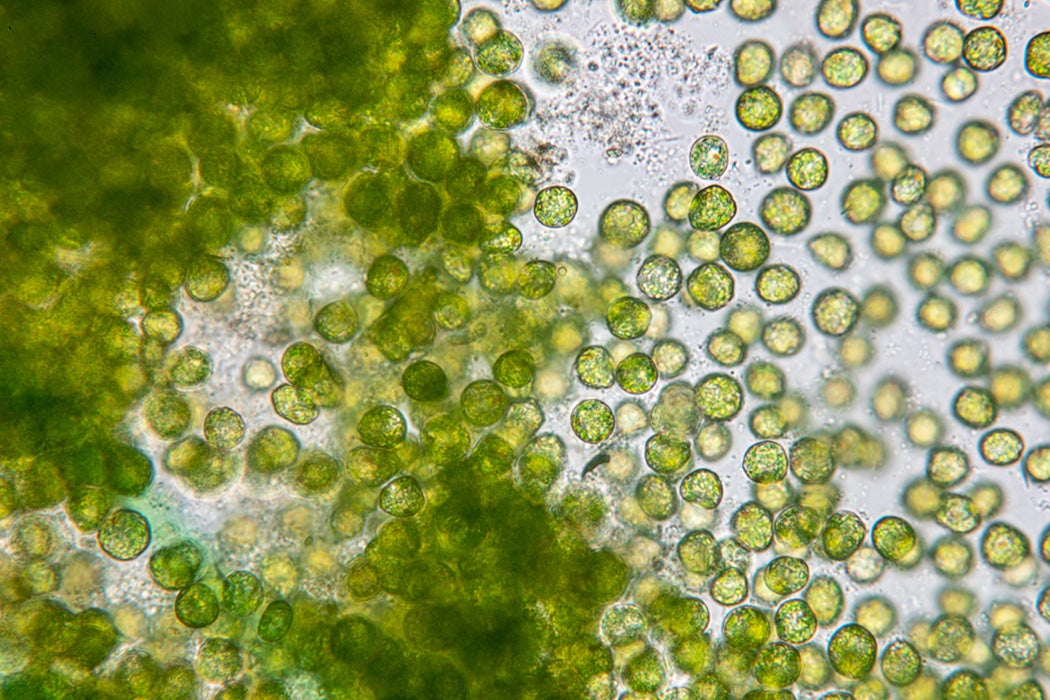

It was in this context that Carnegie Institution-sponsored researchers with the Stanford Research Institute and Arthur D. Little, Inc. embarked on pilot projects growing Chlorella pyrenoidosa. The freshwater algae could convert 25 percent of solar energy into food brimming with protein, calories, and vitamins.

Immediately, Belasco writes, Carnegie scientists began dreaming about mass production of the stuff. They claimed they could produce 17,000 to 40,000 pounds of protein per acre, compared with a paltry 500-ish pounds for soybeans. Major media outlets including the New York Times, Fortune, and Newsweek jumped on the idea, running stories that marveled at the prospect of turning pond scum into dinner.

One significant problem, acknowledged even by its advocates, was that algae was pretty disgusting. But many anticipated that food chemists and psychologists could work together to develop satisfactory algae-based foods, such as steaks “made chewy by addition of a suitable plastic matrix,” as proposed by Caltech biologist James Bonner. After all, he suggested, in the future “human beings will place less emotional importance on the gourmet aspects of food and will eat more to support their body chemistry.”

A flavor panel at Arthur D. Little’s laboratory in Cambridge found that when chlorella was mixed into chicken soup “the stronger, less pleasant ‘notes’ were much reduced and the ‘gag factor’ was not noticeable.”

But it wasn’t just the unpleasant flavor that killed the algae craze. Belasco points out that growing it proved harder than expected. Chlorella had converted 25 percent of light energy to food in a lab, but in uncontrolled sunlit conditions, this dropped to just 2.5 percent, not much better than other crops. And creating ideal growing conditions while keeping out unwanted organisms was tough. Applying technology to supply the world with soybeans and corn turned out to be easier. In addition, the much-feared growth of the world’s population slowed. Over the decades, world hunger dropped dramatically, without the help of algae dinners.