More than a century ago, Belgian information activist Paul Otlet envisioned a universal compilation of knowledge and the technology to make it globally available. He foresaw, in other words, some of the possibilities of today’s Web.

Otlet’s ideas provide an important pivot point in the history of recording knowledge and making it accessible. In classical times, the best-known example of the knowledge enterprise was the Library of Alexandria. This great repository of knowledge was built in the Egyptian city of Alexandria around 300 BCE by Ptolemy I and was destroyed between 48 BCE and 642 CE, supposedly by one or more fires. The size of its holdings is also open to question, but the biggest number that historians cite is 700,000 papyrus scrolls, equivalent to perhaps 100,000 modern books.

The library included a cataloging department, and later scholars created other schemes to sort as well as preserve knowledge. One famous effort was the 18th-century universal Encyclopédie developed by Denis Diderot and Jean Le Rond d’Alembert, along with a classification structure, the “figurative system of human knowledge.” This was highly controversial. It categorized the world’s religions according to ideas of the Enlightenment, illustrating that defining the organization of knowledge can have real-world effects. Despite objections, the Encyclopédie was published between 1751 and 1772 in 28 volumes with supplementary material, for a subscription list of 4,000 names.

Any hope of compacting all we know today into 100,000 books—or 28 encyclopedic volumes—is long gone. The Library of Congress holds 36 million books and printed materials, and many university libraries also hold millions of books. In 2010, the Google Books Library Project examined the world’s leading library catalogs and databases. The project, which scans hard copy books into digital form, estimated that there are 130 million existing individual titles. By 2013, Google had digitized 20 million of them.

This massive conversion of books to bytes is only a small part of the explosion in digital information. Writing in the Financial Times, Stephen Pritchard notes that humanity generated almost 2 trillion gigabytes of varied data in 2011, an amount projected to double every two years, forming a growing trove of Big Data available on about 1 billion websites. But, Pritchard adds, this huge pile of data is unwieldy and unorganized, making it difficult for business executives to extract the information they need.
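The scale of that projection is easier to grasp with a little arithmetic. The sketch below is only a back-of-the-envelope illustration: it assumes the 2011 figure is rounded to 2 trillion gigabytes and that the doubling continues steadily every two years, and the function name is hypothetical rather than anything Pritchard uses.

```python
def projected_data_trillion_gb(year, base_year=2011, base_volume=2.0, doubling_years=2):
    """Projected yearly data volume in trillions of gigabytes.

    Assumes steady exponential growth: the volume doubles once
    every `doubling_years` years, starting from `base_volume`.
    """
    return base_volume * 2 ** ((year - base_year) / doubling_years)


# Print the projection for a few sample years.
for year in (2011, 2013, 2015, 2021):
    print(year, projected_data_trillion_gb(year))
```

Under these assumptions, a decade of doubling multiplies the 2011 volume by 32.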

The data glut affects scientific and scholarly research, too. According to Wendy Hall and her colleagues at the University of Southampton, in the UK, the Web provides a framework for new kinds of research and globally connected projects, yet it “remains a difficult environment in which to create meaningful links. Websites are notoriously difficult to design and maintain, and we rely on search engines to navigate our way around hyperspace…richly connected information environments are still difficult to set up and manage.” And as Katherine Ellison comments in “Too Much Information!” the Web could be made more usable, especially for knowledge workers like writers, “whose jobs demand we plow through the static for useful bytes.”

Search engines let us trek some distance into this world, but other approaches can allow us to explore it more efficiently or deeply. A few have sprung up. Wikipedia, for instance, classifies Web content under subject headings. Users have criticized Twitter, which posts 500 million tweets a day, because they cannot easily find what interests them within the unmediated flood. In response, Twitter has instituted “Moments,” where human editors curate trending topics into slideshows to help orient users.

But there is a bigger question: Can we design an overall approach that would reduce the “static” and allow anyone in the world to rapidly pinpoint and access any desired information? That’s the question Paul Otlet raised and answered—in concept if not in execution. Had he fully succeeded, we might today have a more easily navigable Web.

Otlet, born in Brussels, Belgium, in 1868, was an information science pioneer. In 1895, with lawyer and internationalist Henri La Fontaine, he established the International Institute of Bibliography, which would develop and distribute a universal catalog and classification system. As Boyd Rayward writes in the Journal of Library History, this was “no more and no less than an attempt to obtain bibliographic control over the entire spectrum of recorded knowledge.”

Otlet and La Fontaine published their scheme in 1904 as the Universal Decimal Classification (UDC). It divides all knowledge into nine categories (with a tenth held open for expansion) such as “Linguistics, Literature” and “Mathematics, Natural Sciences,” further broken down into 70,000 subdivisions. These make it possible to classify bibliographic and library materials to a level of fine detail. Updated and translated into 50 languages, the UDC is widely used today in 130 countries.
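The decimal idea behind such a scheme can be sketched in a few lines. What follows is a simplified illustration, not the UDC itself: it uses a handful of class numbers with abbreviated labels and treats each extra digit as one step down the hierarchy, ignoring the punctuation and auxiliary signs that real UDC notation uses. The function name is hypothetical.

```python
# A tiny, illustrative subset of decimal class numbers (labels abbreviated).
UDC_SUBSET = {
    "5": "Mathematics. Natural sciences",
    "51": "Mathematics",
    "53": "Physics",
    "8": "Language. Linguistics. Literature",
    "81": "Linguistics and languages",
    "82": "Literature",
}


def class_path(code):
    """Return the labels from the main class down to `code`.

    Each prefix of the code names a broader class, so the hierarchy
    can be read directly off the digits.
    """
    prefixes = (code[:i] for i in range(1, len(code) + 1))
    return [UDC_SUBSET[p] for p in prefixes if p in UDC_SUBSET]


print(class_path("53"))
print(class_path("82"))
```

Because every longer number begins with the number of its parent class, a cataloger can refine a subject by appending digits without renumbering anything already filed.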

The UDC offered a grand vision: a center that held all the world’s information in organized and accessible form. In 1910, Otlet and La Fontaine proposed to establish such a “city of knowledge,” which they called the Mundaneum.

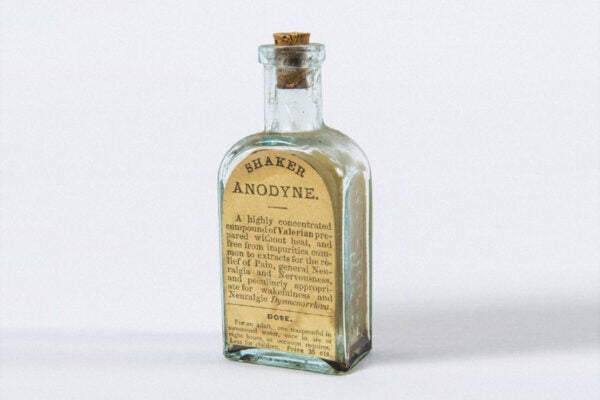

To make the Mundaneum as significant as its founders dreamed, Otlet had to store and access masses of data. Hard copy storage had advanced in 1876, when the American librarian Melvil Dewey published the Dewey Decimal System. He also standardized the paper index cards used in catalogs to their familiar size of three by five inches. Otlet made this card central to his system. Aiming to capture every book ever published, the Mundaneum stored bibliographic data for books under the UDC, along with magazine articles, images, and more on over 15 million cards crammed into catalog drawers.

These catalogs full of paper supported an “ask us anything” research service, where, for a fee, users could telegraph questions into the Mundaneum, but the bulky hard copy format was awkward to copy or share. Otlet tried other approaches. In 1906, with a chemist colleague, he proposed using microphotography to compactly store bibliographic information, documents, and even whole books on microfiche. By 1937, at an international documentation congress, microphotography was lauded as a way to construct a “World Brain.”

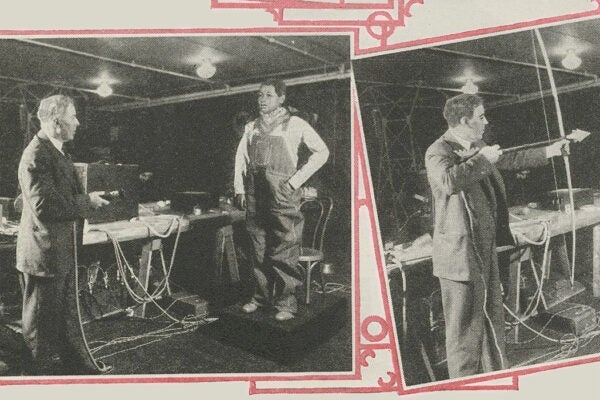

But Otlet’s true conceptual breakthrough came in his Traité de documentation (1934), which presented “the radiated library and the televised book,” a novel scheme for remote access to data with minimal use of hard copy. As described in histories of the Mundaneum and in the documentary film The Man Who Wanted to Classify the World, Otlet proposed a global “réseau” or network of “electric telescopes.” These early workstations were to be linked to the Mundaneum by telephone and the new technology of television. A user would phone in a query, and the answer in a book or other source would appear displayed on a personal screen, which could be split to show multiple results. The network would support audio output, too, and in a final, startlingly predictive touch, Otlet’s system would also enable data sharing and social interactions among its users.

Otlet’s proposed network was never built. The technology of the time would have limited it, but its principles foreshadow features of today’s Internet. There is, however, a sharp contrast between the Mundaneum’s centralized information, classified by experts, and today’s Web, with its “bottom-up” and widely sourced flood of data. Still, Wikipedia’s organizational structure and Twitter’s decision to curate its trending topics show that human classification of information can be useful within a wide-open Web.

Otlet’s approach could have other advantages, too. Writing about the Mundaneum, Alex Wright has noted that, where hyperlinks provide only a “mute bond” between documents, Otlet “envisioned links that carried meaning by, for example, annotating if particular documents agreed or disagreed with each other,” or by mapping out “conceptual relationships between facts and ideas.” Some observers consider these enriched links to be forerunners of the so-called Semantic Web, an idea proposed by Tim Berners-Lee, inventor of the World Wide Web.

In 2001 Berners-Lee suggested replacing that Web, “a medium of documents for people,” with the Semantic Web. “By augmenting Web pages with data targeted at computers and by adding documents solely for computers,” he wrote, we could produce instead a medium of “information that can be manipulated automatically.” Wendy Hall and her colleagues point out that this would “let us recruit the right data for a particular use context—for example, opening a calendar and seeing business meetings, travel arrangements, photographs, and financial transactions appropriately placed on a time line.” More grandly, Berners-Lee wrote that “properly designed, the Semantic Web can assist the evolution of human knowledge as a whole.”
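The difference between a “mute bond” and a link that carries meaning can be sketched in code. This is a minimal illustration only: the document names, the relation labels, and the helper function are all hypothetical, and it does not use any actual Semantic Web standard or library. It simply stores each link as a source, a relation, and a target, so that a program can ask what kind of connection exists.

```python
from collections import namedtuple

# A link that records not just which documents are connected, but how.
Link = namedtuple("Link", ["source", "relation", "target"])

# Hypothetical documents and relations, invented for illustration.
links = [
    Link("doc_a", "agrees_with", "doc_b"),
    Link("doc_c", "disagrees_with", "doc_b"),
    Link("doc_a", "cites", "doc_c"),
]


def sources_related_to(links, target, relation):
    """Return the documents that stand in `relation` to `target`."""
    return [
        link.source
        for link in links
        if link.target == target and link.relation == relation
    ]


# A plain hyperlink could only say that doc_c points at doc_b;
# a typed link lets software find the documents that dispute it.
print(sources_related_to(links, "doc_b", "disagrees_with"))
```

With relations recorded this way, a question such as “which sources dispute this document?” becomes a lookup a machine can perform, which is the kind of automatic manipulation Berners-Lee had in mind.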

These possibilities have yet to be realized because the Semantic Web has not been widely adopted. Setting it up takes considerable human effort, though someday an artificial intelligence might accomplish that. Similarly, Otlet’s network was never tested, even at a small scale. Such a test might have led to a different World Wide Web, or to a better understanding of what it means for a Web to be controlled and defined rather than free and unstructured. But that all became moot when the Nazis entered Belgium in 1940. After examining the Mundaneum as part of their program of plundering the cultures they invaded, they dismantled much of what Otlet had gathered; part of it survived, as did Otlet himself until his death in 1944.

Today, besides its echoes in the World Wide Web and the Semantic Web, all that remains of Paul Otlet’s noble experiment in universal access is a small Mundaneum museum in the Belgian city of Mons, where some tiny fraction of all the world’s knowledge still resides on old index cards stored in wooden cabinets.