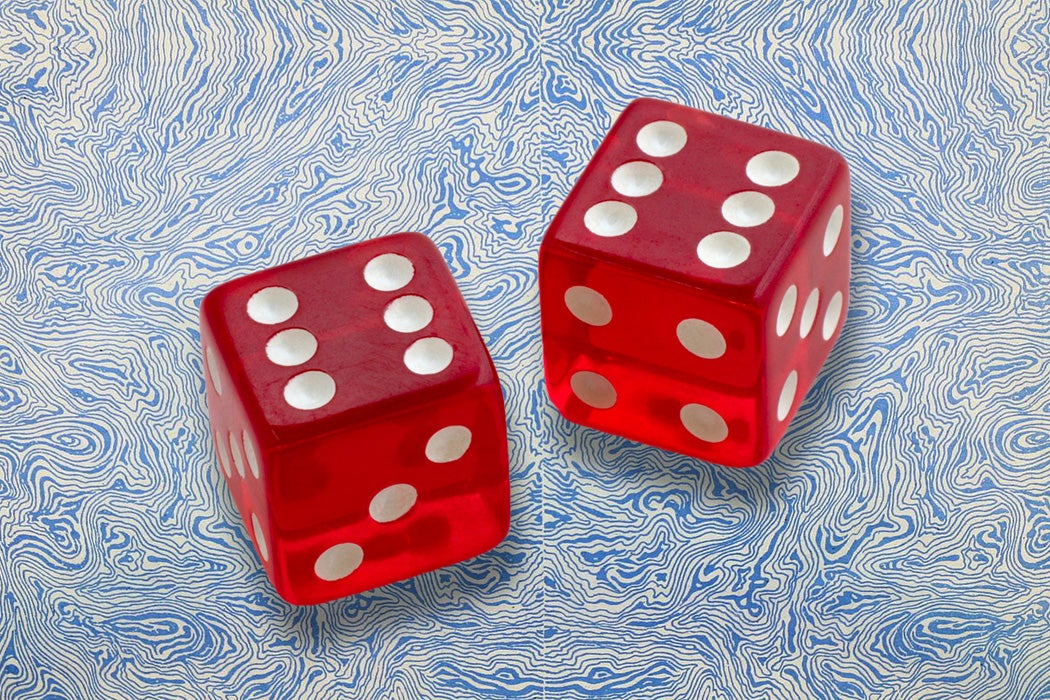

Maybe this has never crossed your mind, but if you have ever tossed dice, whether in a board game or at the gambling table, you have created random numbers—a string of numbers each of which cannot be predicted from the preceding ones. People have been making random numbers in this way for millennia. Early Greeks and Romans played games of chance by tossing the heel bone of a sheep or other animal and seeing which of its four straight sides landed uppermost. Heel bones evolved into the familiar cube-shaped dice with pips that still provide random numbers for gaming and gambling today.


But now we also have more sophisticated random number generators, the latest of which required a lab full of laser equipment at the U.S. National Institute of Standards and Technology (NIST) in Boulder, CO. It relies on counterintuitive quantum behavior with an assist from relativity theory to make random numbers. This was a notable feat because the NIST team’s numbers were absolutely guaranteed to be random, a result never before achieved.

Even so, you might ask why random numbers are worth so much effort. As the journalist Brian Hayes writes in “Randomness as a Resource,” these numbers may seem no more than “a close relative of chaos” that is already “all too abundant and everpresent.” But random numbers are chaotic for a good cause. They are eminently useful, and not only in gambling. Because every random digit is equally likely to appear, like heads and tails in a fair coin toss, random numbers guarantee fair outcomes in lotteries, such as the draws that award prizes to holders of Premium Bonds, a form of UK government savings bond. Precisely because they are unpredictable, they provide enhanced security for the internet and for encrypted messages. And in a nod to their gambling roots, random numbers are essential for the picturesquely named “Monte Carlo” method, which can solve otherwise intractable scientific problems.

When you log into a secure internet site like the one your bank maintains, your computer sends a unique code to identify you to the responding server. If that identifier were the same every time you logged on, or were an obvious sequence like 2468 or QRST, a hacker might steal it or deduce it, impersonate you online, and take your money. But if the identifier is a random string newly created for each user and each online session, there is no pattern for a hacker to deduce, and an identifier captured from one session is useless in the next. That makes it far harder to hack.
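
Here is a minimal Python sketch of issuing a fresh random identifier per session, assuming a Python server. The helper name `new_session_token` is hypothetical. `secrets.token_urlsafe` is a real standard-library function that draws on the operating system’s cryptographically secure random source.

```python
import secrets

def new_session_token(num_bytes: int = 32) -> str:
    """Return a fresh, URL-safe random session identifier (hypothetical helper).

    The standard-library `secrets` module draws its bytes from the operating
    system's cryptographically secure random source.
    """
    return secrets.token_urlsafe(num_bytes)

# Each login gets its own unpredictable identifier.
token_a = new_session_token()
token_b = new_session_token()
print(token_a)
print(token_a != token_b)  # two sessions should never share a token in practice
```

Because nothing about one token helps predict the next, an attacker cannot deduce a valid identifier from earlier ones, as they could with 2468 or QRST.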


This idea shows up in two-step verification: after you present your password to a site, your phone receives a random multi-digit number that you must enter to complete the login. The same logic applies to an encrypted message. The person who receives it needs the key in order to read the message. This key must be transmitted from sender to receiver, and so is vulnerable to interception. But if each message gets a new random key, an eavesdropper who learns one key can read only that one message, not any of the others.
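
The textbook illustration of a fresh random key per message is the one-time pad, in which the message is combined (XORed) with a random key exactly as long as itself. The source does not name any particular cipher, so treat this Python sketch as an illustration of the principle only. It also leaves aside the problem the paragraph raises, delivering the key to the receiver. The function names are hypothetical.

```python
import secrets

def encrypt(message: bytes) -> tuple[bytes, bytes]:
    """One-time-pad style encryption: XOR the message with a fresh random key.

    A new key, as long as the message, is drawn on every call, so knowing
    one key reveals nothing about any other message.
    """
    key = secrets.token_bytes(len(message))
    ciphertext = bytes(m ^ k for m, k in zip(message, key))
    return ciphertext, key

def decrypt(ciphertext: bytes, key: bytes) -> bytes:
    """XOR with the same key undoes the encryption."""
    return bytes(c ^ k for c, k in zip(ciphertext, key))

ct1, key1 = encrypt(b"ATTACK AT DAWN")
ct2, key2 = encrypt(b"ATTACK AT DAWN")
print(decrypt(ct1, key1))  # b'ATTACK AT DAWN'
print(key1 != key2)        # the same text, sent twice, gets two different keys
```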

However, making random numbers for these or any other purposes is not easy. Supposedly random strings often turn out to be flawed because they display patterns. For instance, in 1894, the English statistician W. F. R. Weldon, a founder of biostatistics, tossed dice more than 26,000 times to test statistical theory, but later analysis showed that his dice were unbalanced, yielding too many fives and sixes compared to the other numbers. And in 1901, the great British physicist Lord Kelvin tried to generate random digits by drawing numbered slips from a bowl, but he could not mix his slips well enough to make each digit appear with equal probability.
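
A standard way to check whether dice are balanced is Pearson’s chi-square goodness-of-fit statistic, which the source does not mention. It compares the observed count of each face with the count a fair die would give. The Python sketch below applies it to simulated rolls of a hypothetical die loaded slightly toward 5 and 6. The weights are invented for illustration and are not Weldon’s data.

```python
import random

def chi_square_uniform(counts: list[int]) -> float:
    """Pearson's chi-square statistic against equal probabilities for every face."""
    total = sum(counts)
    expected = total / len(counts)
    return sum((obs - expected) ** 2 / expected for obs in counts)

def roll_counts(weights: list[float], n_rolls: int, seed: int) -> list[int]:
    """Tally n_rolls of a six-sided die whose faces have the given relative weights."""
    rng = random.Random(seed)  # seeded only so the demo is reproducible
    counts = [0] * 6
    for face in rng.choices(range(6), weights=weights, k=n_rolls):
        counts[face] += 1
    return counts

fair = roll_counts([1, 1, 1, 1, 1, 1], 26_000, seed=1)
loaded = roll_counts([1, 1, 1, 1, 1.1, 1.1], 26_000, seed=1)

# With 6 faces (5 degrees of freedom), a statistic above about 11.07
# is significant at the 5% level, suggesting the die is not fair.
print(round(chi_square_uniform(fair), 2))
print(round(chi_square_uniform(loaded), 2))
```

With tens of thousands of rolls, even a small bias like this one usually pushes the statistic well past the threshold. That is why a large experiment like Weldon’s could expose his dice.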

World War II provided another example of imperfect randomness with real-world consequences. It came from the famous effort at Bletchley Park in England, where cryptographers and mathematicians such as Alan Turing worked to break the German military code. The Germans encoded messages with the typewriter-like Enigma machine, which replaced each letter of the alphabet with another letter. The substitution changed with every letter typed, because it was made by a set of rotating wheels, each able to click into one of 26 positions labeled A to Z, that advanced as the operator typed. Before typing a message, the operator would choose initial positions for the wheels, supposedly at random, and send them in code as the key to the coming message. The receiving operator set his Enigma to the same key so that it correctly decoded the message as each incoming letter was typed.
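
To make “a substitution that changes with every letter” concrete, here is a drastically simplified single-rotor toy in Python. It is not the Enigma, which used several rotors, a reflector, and a plugboard. The wiring string is an arbitrary hypothetical permutation. The starting letter plays the role of the key, and the rotor steps one place after each letter.

```python
import string

ALPHABET = string.ascii_uppercase
# Hypothetical rotor wiring: an arbitrary permutation of A-Z (illustrative only).
WIRING = "QWERTYUIOPASDFGHJKLZXCVBNM"
INVERSE = {c: i for i, c in enumerate(WIRING)}

def encode(text: str, start: str) -> str:
    """Encrypt capital letters with one stepping rotor set to position `start`."""
    pos = ALPHABET.index(start)
    out = []
    for ch in text:
        p = ALPHABET.index(ch)
        # Shift into the rotor's current position, pass through the wiring, shift back.
        c = (ALPHABET.index(WIRING[(p + pos) % 26]) - pos) % 26
        out.append(ALPHABET[c])
        pos = (pos + 1) % 26  # the rotor clicks forward after every letter
    return "".join(out)

def decode(text: str, start: str) -> str:
    """Invert encode() by running the wiring backwards from the same start position."""
    pos = ALPHABET.index(start)
    out = []
    for ch in text:
        c = ALPHABET.index(ch)
        p = (INVERSE[ALPHABET[(c + pos) % 26]] - pos) % 26
        out.append(ALPHABET[p])
        pos = (pos + 1) % 26
    return "".join(out)

secret = encode("AAAA", start="K")
print(secret)                     # QHRS: the same plaintext letter encrypts differently each time
print(decode(secret, start="K"))  # AAAA
```

The receiver can decode only by starting from the same position as the sender, which is why the starting key had to be shared, and why predictable keys were a weakness.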

But the operators often became careless or took shortcuts, using the same key for several messages or selecting initial letters near each other on the keyboard. These breaks from randomness, with other clues, helped the Bletchley Park team analyze intercepted Enigma messages and finally break the code, contributing to the Allied effort against the Nazis.

More advanced random number generators came later. In 1955, the Rand Corporation published a million random digits produced by an electronic circuit that, like spins of a virtual roulette wheel, created supposedly unpredictable numbers. But these too displayed subtle patterns. Another approach arrived with the beginnings of digital computation in 1945 and the first general-purpose electronic computer, ENIAC (Electronic Numerical Integrator and Computer), at the University of Pennsylvania.

Computerized randomness came from some of the best scientific minds of the era, associated with the Los Alamos Laboratory of the Manhattan Project to build an atomic bomb: Enrico Fermi, a Nobel laureate for his work in nuclear physics; John von Neumann, considered the leading mathematician of the time; and Stanislaw Ulam, another mathematician who, with Edward Teller, devised the basic design of the hydrogen bomb.

In the 1930s, Fermi had realized that certain problems in nuclear physics could be attacked by statistical means rather than by solving extremely difficult equations. Suppose you want to understand how neutrons travel through a sphere of fissionable material like uranium-235. If enough neutrons encounter uranium nuclei and split them, releasing more neutrons and energy, the result can be a chain reaction that runs away to become an atomic bomb, or one that is controlled to yield manageable power.

Fermi saw that one approach to the problem was to guide an imaginary neutron along its path according to the odds that it would encounter a uranium nucleus. At each encounter the neutron would either be deflected in a new direction or be absorbed by the nucleus, splitting it and generating more neutrons. Random numbers could select each neutron’s initial velocity and decide each of the options it met as it traveled. If the calculation were repeated for a horde of primary neutrons, the secondary neutrons they produced, and so on, the results taken together gave a statistically valid picture of the whole process.
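
Fermi’s idea can be sketched in a few dozen lines of Python. This is a toy built on loudly stated assumptions: a bare sphere, a single neutron speed, lengths measured in mean free paths (the average distance between collisions), and made-up values for the fission probability `p_fission` and the average number of neutrons per fission `nu`. It estimates how many new neutrons each starting neutron produces. In this toy, a value above 1 means a chain reaction can grow.

```python
import math
import random

def random_direction(rng: random.Random) -> tuple[float, float, float]:
    """Pick a direction uniformly over the unit sphere."""
    cos_theta = rng.uniform(-1.0, 1.0)
    sin_theta = math.sqrt(1.0 - cos_theta ** 2)
    phi = rng.uniform(0.0, 2.0 * math.pi)
    return (sin_theta * math.cos(phi), sin_theta * math.sin(phi), cos_theta)

def estimate_k(radius: float, p_fission: float, nu: float,
               n_neutrons: int = 100_000, seed: int = 0) -> float:
    """Estimate secondary neutrons produced per primary neutron (a toy multiplication factor).

    Lengths are in units of the mean free path. At each collision the neutron
    either causes fission (probability p_fission, releasing nu new neutrons on
    average) or scatters off in a new random direction.
    """
    rng = random.Random(seed)
    secondaries = 0.0
    for _ in range(n_neutrons):
        x = y = z = 0.0  # every primary starts at the center of the sphere
        while True:
            dx, dy, dz = random_direction(rng)
            step = rng.expovariate(1.0)  # random flight distance to the next collision
            x, y, z = x + step * dx, y + step * dy, z + step * dz
            if x * x + y * y + z * z > radius * radius:
                break  # escaped from the sphere
            if rng.random() < p_fission:
                secondaries += nu  # absorbed: fission releases new neutrons
                break
            # otherwise scattered: loop again with a fresh random direction
    return secondaries / n_neutrons

for r in (1.0, 3.0, 10.0):
    print(r, round(estimate_k(r, p_fission=0.3, nu=2.5, n_neutrons=20_000), 2))
```

Larger spheres let fewer neutrons escape, so the estimate rises with radius. This is the toy-model version of a critical size. Real reactor and weapons codes track energy-dependent cross sections and many generations of neutrons.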

In 1947, von Neumann and Ulam began simulating neutron behavior on ENIAC. Von Neumann produced random numbers with a computer algorithm that began with an arbitrary “seed” number and looped, turning each number into the next, to produce a succession of seemingly unpredictable numbers. This “Monte Carlo” approach (a name supposedly suggested by the fact that an uncle of Ulam’s used to borrow money to gamble at the Monte Carlo casino) proved successful for the neutron problem. The method became widely applied, and it established the scientific validity of computer simulations for certain types of problems. “Computational physics” is now an important tool for physicists, and Monte Carlo methods contribute in areas as diverse as economic analysis and ornithology.
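
The source does not name von Neumann’s algorithm. The generator he is best known for proposing is the middle-square method: square the current number and keep its middle digits as the next one. Here is a minimal Python sketch for 4-digit numbers. The digit count and the seed 1234 are arbitrary choices.

```python
def middle_square(seed: int, count: int) -> list[int]:
    """Middle-square generator for 4-digit numbers.

    Each step squares the current value, pads the square to 8 digits,
    and keeps the middle 4 digits as the next value.
    """
    values = []
    x = seed
    for _ in range(count):
        squared = str(x * x).zfill(8)
        x = int(squared[2:6])
        values.append(x)
    return values

print(middle_square(1234, 5))  # [5227, 3215, 3362, 3030, 1809]
```

The output looks patternless, but with only 10,000 possible states every sequence must eventually repeat. In practice, many seeds quickly fall into short cycles or collapse to 0.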

But as von Neumann knew, the numbers from his algorithm were not really random. They repeated after many iterations, and the same seed always created the same numbers. Von Neumann commented, tongue-in-cheek but also to make a point, that anyone using this procedure, himself included, was in a “state of sin.” He meant that the procedure violates a philosophical truth: a deterministic process, such as a computer program, can never produce an indeterminate outcome. “Random” numbers made by computer are valid in some cases if used with care, but they are better called “pseudo-random.” Pseudo-random numbers are also what security applications typically rely on. Since hackers could uncover the seed number and the algorithm that uses it, these numbers are predictable in principle and do not give absolute security.
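
To see why a known algorithm plus a guessable seed is a weakness, here is a Python sketch using the standard `random` module. That module is a Mersenne Twister generator, not von Neumann’s method, and its documentation warns against using it for security. The scenario and the names `leak` and `recover_seed` are hypothetical: a system seeds its generator with a number below 10,000, and an attacker who sees one output simply tries every seed.

```python
import random
from typing import Optional

def leak(seed: int) -> tuple[int, int]:
    """A hypothetical system that derives two 'secret' 64-bit values from a seeded PRNG."""
    rng = random.Random(seed)
    return rng.getrandbits(64), rng.getrandbits(64)

def recover_seed(observed: int, max_seed: int) -> Optional[int]:
    """Brute-force every seed below max_seed until one reproduces the observed value."""
    for guess in range(max_seed):
        if random.Random(guess).getrandbits(64) == observed:
            return guess
    return None

victim_seed = 4321                  # hypothetical secret seed from a small space
first, second = leak(victim_seed)   # the attacker observes only `first`

found = recover_seed(first, 10_000)
predicted_second = leak(found)[1]   # same seed + same algorithm = same numbers
print(found, predicted_second == second)
```

Once the seed is recovered, every later “random” value is known in advance. This is the sense in which pseudo-random numbers are predictable.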

The random numbers made at NIST’s Boulder labs in 2018, however, are not “pseudo” because they come from the inherent indeterminacy of the quantum world. The scientist leading the project, Peter Bierhorst (now at the University of New Orleans), made these numbers by applying the quantum effect called entanglement to photons. A photon is a quantum unit of electromagnetic radiation, and its electric field points in a particular direction, called its polarization. A polarization measurement finds the field pointing either horizontally or vertically, and these two outcomes can represent a computer bit with value 1 or 0. Because quantum mechanics is statistical in nature, for suitably prepared photons each outcome, and so each bit value, has the same 50 percent probability of appearing when measured.

When two photons are entangled in the way used here, their polarizations are linked so that, when both are measured the same way, they are always found to be opposite. If the polarization of one photon is measured, whatever the result, the other one, no matter how far away, turns out to have the opposite value. Yet no signal or physical link passes between the photons. Strange as entanglement seems, it is a real, purely quantum correlation that is independent of distance.

In Bierhorst’s scheme, a laser created pairs of entangled photons. Each photon in a pair went to one of two separate detectors that recorded its polarization as a 1 or a 0. These detectors were placed 187 meters (nearly two football fields) apart. Any signal between them, even a radio wave moving at the speed of light, would need 0.62 microseconds to cover the distance. Each detector registered its 1 or 0 in less time than that, ensuring that the measurement at one detector could not influence the other by any conventional means.
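
The 0.62-microsecond figure is just the detector separation divided by the speed of light, which a few lines of Python confirm:

```python
SPEED_OF_LIGHT = 299_792_458  # meters per second (exact, by definition of the meter)
separation_m = 187            # detector separation given in the text

travel_time_s = separation_m / SPEED_OF_LIGHT
print(f"{travel_time_s * 1e6:.2f} microseconds")  # 0.62 microseconds
```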

Nevertheless, the researchers confirmed that the bits measured from each pair were correlated, because the photons were quantum entangled, and that correlation holds over any distance whatsoever. If the bit at detector A could be predicted or controlled in advance, the correlation could be used to send a message to detector B faster than light. But relativity forbids such communication, so the outcome at each detector must be unpredictable: the 1s and 0s arrive in random order, with each value occurring half the time.

The result was the first set of measured binary digits that can be unequivocally certified as random. These unpredictable numbers would supply total security and completely valid statistics if they replaced pseudo-random numbers in applications. NIST plans to integrate the quantum procedure into a random number generator that it maintains for public use, to make it more secure. Meanwhile, Bierhorst is working to reduce how far apart the detectors must be to guarantee that there is no conventional communication between them.

Apart from its technological value, this quantum random number generator would have intrigued Einstein. He would probably be pleased that relativity theory contributed to the result; but the process also says something about quantum theory, whose random nature Einstein rejected when he said that God does not play dice with the universe. Maybe not, but humanity has worked out how to make its own superior, quantum-based, and truly random dice.