Slavoj Žižek is a Slovenian philosopher, cultural critic, and Lacanian psychoanalyst. A prolific writer and lecturer, he is best known for his seemingly endless supply of brilliant and provocative insights into contemporary politics and culture.

From his home in Ljubljana, Žižek was kind enough to answer a few of my questions about how we should best understand his ideas. In the wide-ranging style that he has come to be known for, he also explained why he cannot resist a dirty joke, why the task of philosophy is to corrupt the youth, and why the man once dubbed “the most dangerous philosopher in the West” is aiming at something much more modest.

Mike Bulajewski: What is the best text to read to understand your work?

Slavoj Žižek: Although there are a couple of candidates to understand the philosophical background, maybe the first book of my colleague and friend Alenka Zupančič on Lacan and Kant, Ethics of the Real, comes pretty close to the top.

But it depends which type of writing you mean because obviously in the last decade or so I’ve written two types of things: on the one hand, these more philosophical books, usually about Hegel, post-Hegelian thought, Heidegger, the transcendental approach to philosophy, brain sciences, and so on. On the other hand, more politically engaged work. First, I think that my philosophical books are much superior. My more political writings like The Courage of Hopelessness, Against the Double Blackmail, and so on, these are things that I myself don’t fully trust. I think I’m writing them just to say something that I always expect somebody else should have said. Like, why are others who are more professional not doing it?

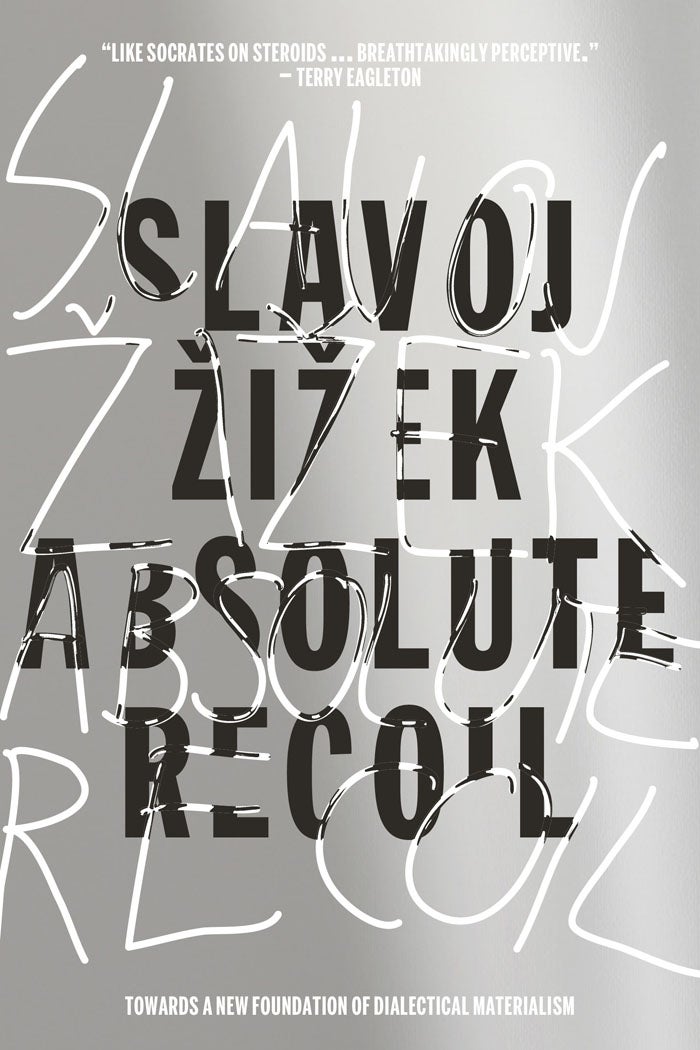

Of philosophical books, I think it’s the one that comes after Less Than Nothing: Absolute Recoil. I think this is the ultimate statement of my philosophical position up until now. Now I’m trying to catch up with that one because I’m always obsessed by the idea that the essential insight skipped me, that I didn’t catch it. My big trauma was finishing that mega book Less Than Nothing. It’s over 1,000 pages, but immediately after finishing it I was afraid I didn’t catch the basic thought, so I tried to do it in Absolute Recoil. But it’s a more difficult book.

What do you think is the most misunderstood concept in your work? Do you think there’s something that we, the readers, don’t want to understand?

It’s not so much a concept as maybe a topic. I think that my philosophical books are not even so widely read, and they are usually misunderstood. What I aim at is a very precise intervention. We are in a certain very interesting philosophical moment where the deconstructive approach, which in different versions was predominant in the last couple of decades, is gradually disappearing. Then we have—how should I call it—the new positivism, brain sciences, even quantum physics—these scientific ways to reply to philosophical questions. Stephen Hawking said in one of his later books that today, philosophy is dead, science is approaching even basic philosophical questions, and he was right in some sense. Today, if you ask, “Is our universe finite or infinite? Do we have an immortal soul or not? Are we free or not?” people look for answers to these questions in evolutionary biology, brain sciences, quantum physics—not in philosophy.

So, between these two extremes, is there a place for philosophy proper? Not just this deconstructionist, historicist reflection asking, “What is the social context or the discursive context of a work,” but also not a naïve realism, let’s look at reality how it is, and so on. Usually this basic thrust of my work is not understood. So, for me, it is quite comical how I am often for the same work attacked by both sides attributing to me the opposite position. For some Habermasian theorists of discourse, I am a naïve psychoanalytic positivist. For brain scientists, I am a still naïve European metaphysician or whatever.

But now we come to the interesting part. In my political writings, I noticed, the same is happening. You remember, maybe you caught an echo of the ridiculous exchange I had a few months ago with Jordan Peterson. You know what I find so comical there? On the one hand, politically correct, transgender, #MeToo people attacked me for being—I don’t know—anti-politically correct, anti-gay basically, even a Trump supporter, alt-right guy or whatever. Many of them—especially after my criticism of some of the aspects of #MeToo and the transgender movement—see me as an enemy. But the large majority of partisans of Jordan Peterson who reacted to my two short texts attacked me as a pure example of deconstructionism, cultural Marxism, and so on. What was really interesting about their reactions to my work was how, for the same text, I am often attacked by both sides. My old political book, Welcome to the Desert of the Real, I find it quite funny… My Arab friends accused me of being too sympathetic to Zionism and that I am spreading some Zionist lies there and so on. An Egyptian friend told me there was an attack on my book in Al-Ahram, the main Egyptian daily, almost two decades ago, attacking me as the most perfidious Zionist propaganda. On the other hand, The Jerusalem Post, the main center-right daily in Israel, attacked me as the most dangerous version of new anti-Semitism.

So, this is what I find superficially interesting in reactions to my work. I think it is a sad proof that people are not really reading it and following the line of argument, they just look for some short passages and quotes which can be read in their way. But I am not a pessimist because of this. I follow here Jean-Paul Sartre, who said that if, for the same text, you are attacked by both sides, it’s usually one of the few reliable signs that you are in the right.

Is there something symptomatic about that? In the sense that between two sides, pro- and anti-Zionism, they’re trying to reassert the parameters of the discourse or the debate. It’s O.K. to be on either side at some level, but to occupy some kind of third position is the most destructive, or controversial, position. Are we locked in these debates?

Yes, these are false debates, that’s my thesis. For example, look at transgender political correctness and #MeToo. You would have thought that the only choice is either morally conservative common-sense criticism of politically correct transgender “excesses” or fully supporting transgenderism. My position here is, of course, not to criticize #MeToo or the transgender position from the right-wing or conservative attitude, but from the progressive way. My reproach to the #MeToo movement is not that they are too crazy, too moralist—no! It is that their moral puritanism and fanaticism is really not radical enough.

For me, advocates of political correctness are of course basically right. Women are oppressed, there is racism, and so on. But the way they approach it doesn’t work. I am not advocating some third space of a compromise. I am saying the way these problems are approached is, in its entirety, both poles, is wrong. Let me give you an example which is often considered problematic. There is a big debate now, and it is a totally justified debate: how do you do dating, seduction, after #MeToo? What are the new rules? As you probably know, I’ve written about it.

One of the rules people try to emphasize is the right to say no at any moment. Like, let’s say you, as a woman, you are seduced, you say yes. Then in the middle of sexual activity you discover your partner is rough, inconsiderate, there’s something vulgar about him or you become aware that your yes was an enforced yes. You have the right to withdraw. But a new form of extremely humiliating violence, not physical violence but mental violence, is opened up if we just follow this rule. Let’s say a guy is seducing a girl. She says yes sincerely; it is not an enforced yes. And when she gets fully excited and so on, all red in the face, the guy says, “Sorry, I have the right to withdraw, I changed my mind.”

My point here is not that sometimes no doesn’t mean no. It always means no. I’m just saying that sexuality is a complex domain with implied meanings, ambiguities. You cannot translate it into rules. And that is my basic reproach to the way I read their proposals. The #MeToo new seduction rules precisely are under the spell of a certain legalism. They think the solution is to explicate the rules. The problem cannot be solved at this level. That’s all that I wanted to say.

I’m not downplaying violence. My obsession, and I think it is a big legacy of the critique of ideology of the last centuries, is how a certain rule, slogan or practice which may appear to open up the space for new freedom, emancipation, nonetheless can be misused or has potentially dangerous consequences. As I said viciously at some point, I don’t bring clear answers; I like to complicate things. Usually people say that a philosopher, when you are confused, should bring clarity. I say no! We think we see things clearly in daily life; I long to confuse things.

You mentioned Jordan Peterson, and he is influenced by Carl Jung. Do you have any comments about psychoanalysis in general? Because at least in the United States, we probably are primarily influenced by Anna Freud’s ego psychology. Carl Jung is influential in some ways, partly through the New Age movement…

Don’t underestimate Jung’s influence. In most countries, Freud is seriously debated, but more in literary criticism, maybe in psychology, philosophy, and theoretical circles. But Jung’s work is much more popular. His books are best sellers. For example, after the fall of communism in Russia, ex-Soviet Union, forget about Freud, I was told that Jung was selling hundreds of thousands, even millions of copies, and so on. And of course, here, O.K., we don’t have time to go into detail, but I claim here I am an old-fashioned Stalinist. Jung is a wrong way. Jung is a New Age obscurantist reinscription of Freud. But, you know, in my debate with Jordan Peterson, I totally ignored the Jungian aspect.

Where I see red (as they say) is how Peterson makes a sudden jump from criticism of #MeToo and of transgender politics to his obsession with the terror of cultural Marxism in a totally illegitimate way. All of a sudden he mobilizes one of the basic ideological motifs of new conservatives in Europe, which is that after the fall of Stalin, after the revolution failed in Western Europe in the 1920s, some mysterious Communist center decided we cannot destroy the West directly, we must first destroy it morally, its Christian ethical foundations, so through the Frankfurt School and so on, they mobilized the cultural Left. And they see all these phenomena—radical feminism, transgenderism, and so on—as the final outcomes of so-called cultural Marxism and its bent to destroy the West. Now, I consider this total nonsense which is even factually inaccurate.

It is interesting to reread texts by Horkheimer, Adorno, and other great names of the Frankfurt School. For example, one should reread today Horkheimer’s text from the late 1930s, “Authority and Family,” where Horkheimer does not simply condemn the patriarchal family, but emphasizes how in today’s capitalist society, patriarchal society is very ambiguous. Yes, it is the model of oppression and so on, but at the same time without paternal authority, a child cannot develop an autonomous moral stance which would enable him to gain some kind of ethical autonomy to critically oppose society. So, for Horkheimer and later Adorno, things are clear. A society without paternal authority is a society of youth gangs, of young people who elevate peer values, who are not able to take a critical stance toward society, and so on. It’s ridiculous how inaccurate this image is that paints the terror of cultural Marxism.

My view here is exactly the opposite. What people like Jordan Peterson call cultural Marxism is precisely, as I put it a little bit aggressively, one of the last bourgeois defenses against Marxism. It is falsely radical. I’m not saying that Bernie Sanders is a Marxist. He is just a relative moderate measured by the standards of half a century ago, but he is maybe the first serious American social democrat in the last decades. But did you notice how there were immediate clashes between him and transgender and #MeToo people? Once he said in a famous statement—that’s why I like Bernie Sanders—that it’s not enough for a woman to say, “I’m a Latina, vote for me.” We should also ask, “O.K., nice, but what’s your program?” Just for saying that he was attacked for white supremacism. This is why I think that this so-called cultural Left is one of the main culprits for democratic defeat, the price left-liberals paid for their obsession with these cultural issues. My god, you remember a year and a half ago, if you opened up the New York Times, you’d have thought the main problem is what type of toilet we should have. Then you get Trump!

Can you comment on your style? From various things I’ve heard you say, you’re uncomfortable being treated as an intellectual authority, as a type of father figure. There are the dirty jokes, and you’ve said you don’t like the formal title of “Professor Žižek.”

But I take my work seriously. I don’t want to be treated with respect because I think there is always a hidden aggression with respect. At least in my universe, and maybe I live in a wrong universe, this type of respect always subtly implies that you don’t take someone’s work totally seriously. I don’t want to be respected as a person. I don’t care how you call me, Slavoj or an idiot, whatever. I want you to focus on my work.

Here, I am a little bit divided. You know where maybe you can catch me? On the one hand I say that I want you to focus on my work. But in my work and maybe even more so in my speech, there is obviously some kind of compulsion to be amusing and to attract attention. So yes, I have a problem. This is why I more and more like writing and not public speeches and talking. Because in writing, you can focus on what it is all about. And I will tell you something that will surprise you. The best lesson that I had in the last decades is how my philosophical books, which are considered unreadable, too long, too difficult, often sell better than my political books. Isn’t this wonderful?

The lesson is we shouldn’t underestimate the public. It’s not true what some pessimists say, that people are idiots, you should write short books, just reporting or giving practical advice—no. There still is a serious intellectual public around. That’s what gives me hope.

We often expect intellectuals to conform to a certain image of seriousness when they appear in public, and obviously you don’t do those kinds of things, and maybe you even undermine this image. Does that limit your influence?

Yes, I agree with you. It’s a very nice insight. You can say up to a point that some people take my so-called popularity as basically a subtle argument against me. People say, “He’s funny, go listen to him, but don’t take him too seriously.” And this sometimes hurts me a little bit because people really often ignore what I want to say. For example, the point of madness was reached here—maybe you read it—John Gray’s review of Less Than Nothing in the New York Review of Books. It’s a complex book on Hegel. I made this test with my friends who had only read Gray’s review. I asked them what was their impression of the book? What do I claim in the book? They didn’t have any idea. The review just focuses on some details which are politically problematic, excesses, and so on. But my God, I wrote a book on Hegel. What do I say in it? It is totally ignored. But on the other hand, I think I shouldn’t complain too much here, because, you know, this happens to philosophers. It is happening with Heidegger, with the Frankfurt School, with Lacan, and so on. One simply has to resign oneself to it and accept it. Philosophers are here mostly to be misunderstood.

Does the darkness of your work come through more in your movies, the Pervert’s Guide to…?

I never thought about it, but can I tell you another secret here? Sophie Fiennes was very friendly with me and we did the two movies together, but do you know that I hated making them? It is so traumatic for me to perform in front of a camera, especially to be treated as an actor. Like once I was improvising something for 20 minutes, and then Sophie Fiennes told me, “It was excellent, Slavoj, but there was some problem with the sound. Could you do it again?” She is my friend but at that point I was ready to kill her! You know, who cares about the fucking sound, my God. I was successfully developing a line of thought; I was improvising; I wasn’t acting in the sense of following a script, and now let’s go back and do it again? It was a nightmare.

But the tone comes through. The movies have a certain ominous quality.

You are right here, and you know what is important here? This is maybe one of the existential wagers of my work, to use this fashionable term. To be a radical leftist, you don’t have to be some kind of a stupid optimist, where you believe that, if not for capitalism and oppression, people would be happy, and we are building a new society, and so on. No! In my last theoretical book, Incontinence of the Void, I go openly into how, at a different level, if there will be something like communism, maybe people will be much unhappier. Life will be much more tragic. I don’t think we should confuse happiness and these psychological categories with progressive politics.

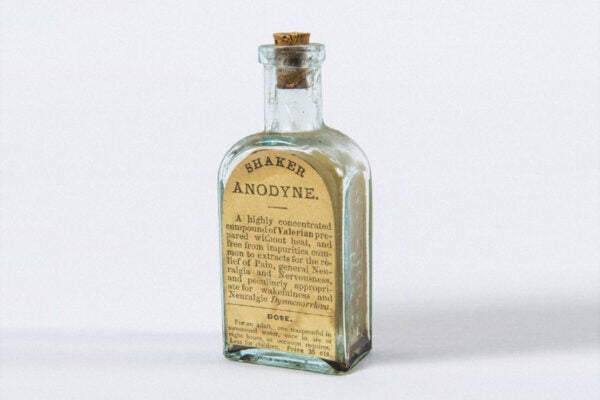

I wrote something published in The Philosophical Salon attacking happiness studies, and I go to the end there. I claim that happiness is not an ethical category; it is a category of compromise. To be happy, you have to be hypocritical and stupid. I’m getting more and more dark here. I think that—if I may use this bombastic category—creativity is something that does not make you happy. It is something very traumatic and painful. It is the same with love: There is no happy love, not in the sense that it always goes wrong, but if you remember how it is being really passionately in love, my God! Your peace of life is ruined; everything is thrown out of balance, and this intensity is what matters. There is nothing happy about it.

You write in Incontinence of the Void: “Only hysteria produces new knowledge, in contrast to the University discourse, which simply reproduces it.” How should we think about this statement in relation to what you are trying to achieve with your work?

I’m not new here. I’m repeating Lacan here, Freud already. The target there, if you remember correctly, is perversion. My old animosity to May ’68, where the idea is perverts are radical. They even quote Freud, who wrote that hysterics are ambiguous, since they just provoke the master with a secret call for a new, more authentic master, while perverts go to the end. And here I think we should totally change the coordinates. No! Perverts are constitutive of power. Every power needs a secret pervert underground, backside.

But now we have Trump, and Trump is on the side of perversion, no? He seems to revel in transgressing norms.

Up to a point, yes. Who for me is the symptom of Trump? Steve Bannon. If you look at his economic proposals, he says something that is usually attributed to the Left and that no social democracy today dares to do: raise the taxes to 50% for the rich, big public works, and so on. And that’s the general tragedy of our time, that Left moderates have become the cultural Left and the new populist Right is taking over even many of the old social democratic motifs. Social democracy in Europe, even more than in the U.S., is disappearing. That’s why I think people like Bernie Sanders are important.

As some intelligent observers wrote, Sanders succeeded in mobilizing for the Left many people who would have otherwise voted for Trump. But on the other hand, when you ask about Trump as perversion, yes, I agree with you, especially in this sense—here I am a classical moralist. For me the new motto of the Left should be, “We are the Moral Majority.” What I mean is this: Look at Trump’s speech and how the level of public discourse has degraded. Things he says publicly were unthinkable two, three decades ago. And it’s not just the United States. We in Europe are no better. I really think we live in a time of, let’s call it, ideological regression. History is not necessarily progressive. This is what Hitler did with fascism. Things that were already part of public life but strictly limited to crazy conversation in some small cafeteria where you talk all the obscenities become part of public speech. Again, it is happening also in Europe.

O.K., going back to “Only hysteria produces new knowledge…”

Remember what hysteria is? To simplify it, from a psychoanalytic standpoint, society confers on you a certain identity. You are a teacher, professor, woman, mother, feminist, whatever. The basic hysterical gesture is to raise a question and doubt your identity. “You’re saying I’m this, but why am I this? What makes me this?” Feminism begins with this hysterical question. Male patriarchal ideology constrains women to a certain position and identity, and you begin to ask, “But am I really that?” Or to use the old Juliet question from Romeo and Juliet, “Am I that name?” Like, “Why am I that?” So hysteria is this basic doubting of your identity.

People often identified me as a hysteric with my outbursts. O.K., why not? I am hysterical. But you know what gave me hope? Lacan beautifully designated Hegel as “le plus sublime des hystériques,” the most sublime of all hysterics, because dialectics is precisely this reflexive self-questioning, questioning of every position. That’s why Hegel was aware of the feminine dimension. In his reading of Antigone, he said that woman is the eternal irony of human history, precisely through these ironic questions. I experienced this a couple of times—this is primitive sexual difference, but I think there is a moment of truth here—that when I am talking with a woman, sometimes I get engaged in my reflections and, all of a sudden, I get caught up in my own game of taking myself too seriously. And then I see in the woman, my partner in conversation, a kind of a silent mocking gaze—like, “What, are you bullshitting me?” No direct brutal irony, just a silent gaze which destroys you.

Your work has sometimes been criticized as not systematic. Is that intentional? You mentioned previously that, as a philosopher, what you’re trying to do is not to clarify, so much as to problematize.

At the same time, I must say that although I like to use jokes and stories, I do try to put things clearly. But I am here in good company. Take Hegel’s Phenomenology of Spirit, maybe the greatest philosophical work of all time. It was often reproached for the same reason: that it is not clear what Hegel’s position is, that he just seems to jump from one position to another, ironically subverting it, and so on. In a way, from the very beginning, from Socratic questioning, philosophy is this. Without this hysterical questioning of authority, there is no philosophy, which is why, as my friend Alain Badiou recently put it, it is not an accident that Socrates was condemned to death for corrupting the youth. Philosophy has done this from the very beginning. The best definition of philosophy is “corrupting the young,” in the sense of awakening them from an existing dogmatic worldview. This corrupting is more complex today, because constant self-doubt, questioning, and irony is the predominant attitude. Today, official ideology is not telling you, “Be a faithful Christian,” but some sort of post-modernist ideal, “Be true to yourself, change yourself, renovate yourself, doubt everything.” So now the way we corrupt young people is getting more complex.

You are often described as a very provocative thinker, but this seems overstated to me. Do you think you are in fact not provocative at all?

That’s a really nice insight. I really like it. I’m always telling people who claim, “You know you are talking madness, you cannot mean it seriously.” Whenever they say something like that, I immediately explain it in a way which makes it almost common sense, and I’m not saying anything big or revolutionary. I’m saying that today we need a little bit more radical politics, but like, social democracy. I’m very much against all these Nietzschean “beyond good and evil” ideas. I’m for kind of a common morality. There are no big provocations here.

Even in philosophy, I’m not claiming I’m bringing out something radically new. I’m just trying to explain what I see already there in Hegel. But I will tell you something else I liked in what you said, and that’s my maybe silent hope. Do you know that all big ruptures, or most of them, in the history of thought, occurred as a return to some origins? I always quote Martin Luther. His aim was not to be a revolutionary; his aim was to return to the true Christian message, against the Pope and so on. In this way, he did perform one of the greatest intellectual revolutions where everything changed and so on. I think it is a necessary illusion, paradoxically. To do something really new, maybe the illusion is necessary that you are really just returning to some more authentic past. It must be misperceived as being already there. And I will take the ultimate example here: As many people noticed, it is clear that when Jacques Lacan talks about the return to Freud, well, this Freud is to a large extent something that he rediscovered in Freud, but it is more that he filled in Freud’s gaps and so on. We should never forget that Lacan, who confused everything and brought a revolution to psychoanalysis, perceived himself just as someone who returned to Freud. That’s why I like this idea. For me, true revolutionaries always had a conservative side.

I will give you another example which maybe you will like. At the beginning of modernity, all those apparent radicals, empiricists, whatever, they really didn’t get it. One of the guys who really got what modernity is about is Blaise Pascal. But his problem was not, “Let’s break with the past,” but precisely how to remain orthodox Christian in the new conditions of science and modernity. As such, he understood much better what was emerging with modern science than all those scientific empiricist enthusiasts or whatever. What may appear as a conservative move is something that enables you to see things that others may not see.

Another example which has always fascinated me: the passage from silent to sound cinema. Those who resisted it spontaneously—from the Russian avant garde up to Charlie Chaplin—they perceived much more clearly the uncanny dimensions of what was happening there. As I developed in my books, for almost 10 years Chaplin resisted making a full sound movie, no? An early movie to use sound, City Lights, just uses music. Then in Modern Times, you hear sounds and human speech, but it is always sound which is part of the narrative. For example, you hear human speech if it is the voice of a radio shown in the movie. Only with The Great Dictator do you have speaking actors. But who is the agent of sound? Hitler, with this wild shouting. So Chaplin as a conservative saw this threatening, living-dead, destabilizing dimension of the voice, while those idiotic proponents of sound cinema perceived the situation in stupid realist terms, “Fine, now that we have also sound, we can reproduce reality in a more realist convincing way.”

That’s why I don’t like a guy like Ray Kurzweil. In his idea of Singularity, he misses something. It’s so simple, like, yes, we will all become part of the singularity. He doesn’t even address the key questions: How will this change our identity? He kids there. He thinks all these great things will happen, but we will somehow remain the same human beings, just with additional abilities.